Self-Coded Integrations

How Zenera agents write, debug, version, and evolve their own integration code — a runtime-generated approach to enterprise connectivity.

Design Principles

Zenera's integration architecture is built on five axioms that collectively define a system where integration logic is a runtime-generated artifact rather than a pre-built dependency.

Axiom 1

Integration Is Code, Not Configuration.

Every connection between an agent and an external system is executable code — Python scripts, shell commands, or composite pipelines — not a declarative tool definition. This means integrations can express arbitrary logic: conditionals, loops, retries, data transformations, multi-step transactions, and error recovery that protocol-driven approaches (MCP, GraphQL Federation) cannot represent.

Axiom 2

Authentication Is Infrastructure, Not Application Logic.

Credential management — OAuth2 flows, token refresh, certificate rotation, API key vaulting — is handled by a dedicated abstraction layer and never appears in skill code. Agents request tokens by provider name. The infrastructure handles the rest.

Axiom 3

Skills Are Versioned Artifacts With Provenance.

Every skill is an immutable, versioned object stored in the platform's transactional storage (LakeFS). Skills have lineage: who created them (agent or human), when, from what API documentation, with what test results. Rollback to any previous version is atomic.

Axiom 4

Discovery Is Semantic, Not Enumerative.

Agents find skills through natural language search over descriptions, not by scanning a flat registry of tool schemas. Only the matched skill's interface enters the context window. Implementation code never pollutes the LLM's context.

Axiom 5

The System Self-Extends.

When an agent encounters a capability gap — a system it cannot reach, an operation no existing skill covers — it generates a new skill, tests it, and persists it. The platform's integration surface grows as a direct consequence of agents performing their work.

Skill Data Model

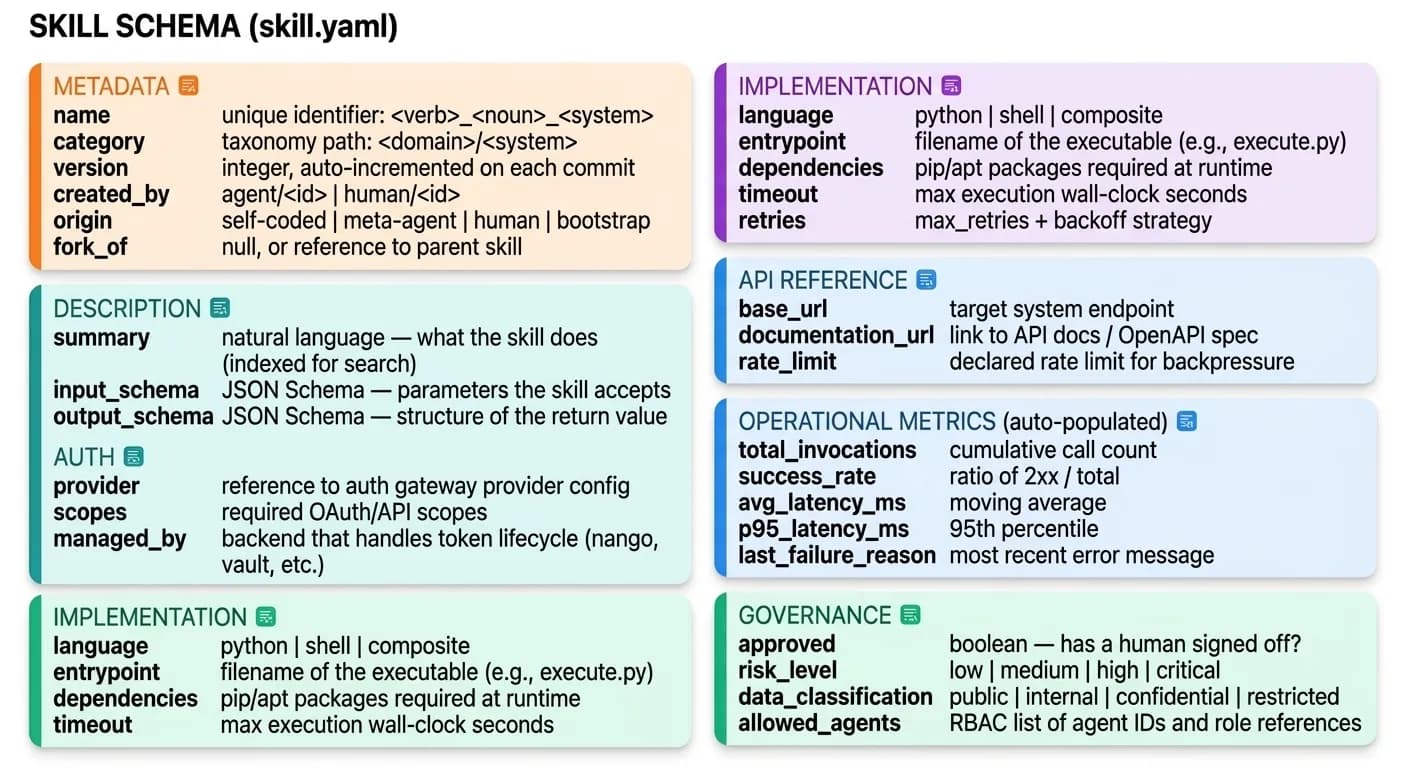

A skill is the atomic unit of integration capability in Zenera. It is a structured artifact stored in LakeFS with a precise schema.

2.1 Skill Anatomy

A skill is composed of seven top-level sections. Each section maps to a distinct concern: identity, semantics, security, implementation, provenance, runtime health, and governance.
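The seven concerns can be pictured as a nested record. The sketch below is illustrative only: the `Skill` class and the keys inside each section are assumptions drawn from details mentioned elsewhere in this document, not the platform's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical in-memory view of a skill: one dict per top-level section."""
    identity: dict        # e.g. name, category, version
    semantics: dict       # e.g. natural-language summary, input/output schema
    security: dict        # e.g. auth provider name, data classification
    implementation: dict  # e.g. language, entrypoint
    provenance: dict = field(default_factory=dict)      # creator, source docs, test results
    runtime_health: dict = field(default_factory=dict)  # invocation and success counters
    governance: dict = field(default_factory=dict)      # allowed roles, review status

    def section_names(self) -> list[str]:
        # Dataclass fields keep their declaration order.
        return list(self.__dataclass_fields__)


skill = Skill(
    identity={"name": "fetch_vendor_invoices_sap", "version": 1},
    semantics={"summary": "Retrieves vendor invoices from SAP S/4HANA."},
    security={"auth_provider": "sap-s4hana-prod"},
    implementation={"language": "python", "entrypoint": "execute.py"},
)
```

Keeping each concern in its own section means a search index can read `semantics` without ever touching `implementation`.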

2.2 Skill Directory Structure

Each skill is a directory within LakeFS containing metadata, code, tests, and documentation.

2.3 Skill Types

Skills exist in four forms, representing different stages of maturity:

| Type | Description | Code | Tests | Metrics | Origin |
|---|---|---|---|---|---|
| Bootstrap | Yes | No | No | No | Human-configured |
| Self-Coded | Yes | Yes | Auto-generated | Accumulating | Runtime agent |
| Meta-Agent Generated | Yes | Yes | Yes | Pre-seeded | Design-time Meta-Agent |
| Promoted | Yes | Yes (reviewed) | Yes (reviewed) | Comprehensive | Human-approved from any source |

Bootstrap skills are seeds. Self-coded skills are first drafts. Meta-Agent generated skills are pre-tested. Promoted skills are production-hardened. All share the same schema and are discoverable through the same mechanism.

Authentication Abstraction Layer

The authentication layer decouples credential management from skill execution through a dedicated service that mediates all external system access.

3.1 Architecture

Multiple agents share a single auth gateway. The gateway validates identity, enforces RBAC, resolves tokens from provider backends, and logs every grant, all without exposing any secret to the calling agent.

Invariants

- Agents NEVER see raw client secrets, private keys, or refresh tokens

- Bearer tokens are short-lived (typically 5–60 min) and scoped to the request

- All token grants are logged with agent ID, provider, scopes, timestamp

- Token refresh happens transparently — agents are never aware of expiry

- Multi-tenant: same provider can serve different credentials per team/environment
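A toy gateway makes these invariants concrete. This is a minimal sketch, not the platform's implementation: `AuthGateway`, the `issue` callable (standing in for a provider backend such as Nango or Vault), and the injectable `clock` are all hypothetical, and scopes are omitted from the audit record for brevity.

```python
import time


class AuthGateway:
    """Toy gateway: RBAC check, cached short-lived tokens, audit log.

    `issue(provider)` is a hypothetical callable returning (token, ttl_seconds).
    Client secrets live only inside that callable, never in the caller.
    """

    def __init__(self, issue, allowed_roles, refresh_before_expiry=300, clock=time.time):
        self._issue = issue
        self._allowed = allowed_roles            # provider -> set of role names
        self._refresh_before = refresh_before_expiry
        self._clock = clock
        self._cache = {}                         # provider -> (token, expires_at)
        self.audit_log = []

    def get_token(self, agent_id, role, provider):
        if role not in self._allowed.get(provider, set()):
            raise PermissionError(f"{agent_id} may not use {provider}")
        now = self._clock()
        token, expires_at = self._cache.get(provider, (None, 0.0))
        # Refresh early so callers never observe an expired token.
        if token is None or now >= expires_at - self._refresh_before:
            token, ttl = self._issue(provider)
            self._cache[provider] = (token, now + ttl)
        self.audit_log.append({"agent": agent_id, "provider": provider, "ts": now})
        return token
```

The caller only ever receives a bearer token; refresh timing and the audit trail are side effects it cannot observe or bypass.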

3.2 Provider Configuration

Providers are configured once by platform administrators and stored in the auth backend. The example below shows a SAP S/4HANA production provider using OAuth2 client credentials via Nango:

```yaml
# providers/sap-s4hana-prod.yaml
provider:
  name: sap-s4hana-prod
  display_name: "SAP S/4HANA Production"
  backend: nango  # nango | vault | aws-secrets | custom
  connection:
    type: oauth2_client_credentials
    token_url: "https://auth.sap.com/oauth2/token"
    scopes:
      - "API_BUSINESS_PARTNER_0001"
      - "API_PURCHASING_ORDER_SRV"
    audience: "https://api.sap.com"
  nango_config:
    integration_id: sap-s4hana
    connection_id: prod-instance-01
  lifecycle:
    token_ttl: 3600  # seconds
    refresh_before_expiry: 300  # refresh 5 min before expiry
    max_refresh_retries: 3
    fallback_on_refresh_failure: error  # error | cached_token | alert_and_error
  governance:
    data_classification: confidential
    allowed_roles:
      - role/erp-agents
      - role/finance-agents
    rate_limit: 100/minute
    audit_retention_days: 2555  # 7 years for SOX compliance
```

3.3 Agent-Side Interface

From the agent's perspective, the entire authentication system collapses to a small, stable API:

```python
import requests

from zenera.auth import get_token, get_connection_info

# Simple token acquisition: handles OAuth2, refresh, and caching transparently
token = get_token("sap-s4hana-prod")

# Use in an HTTP request
base_url = "https://myinstance.s4hana.cloud.sap"  # example instance URL
response = requests.get(
    f"{base_url}/sap/opu/odata/sap/API_BUSINESS_PARTNER",
    headers={
        "Authorization": f"Bearer {token}",
        "Accept": "application/json",
    },
    timeout=30,
)

# For providers that require more than a bearer token
# (e.g., HMAC signing, custom headers)
conn = get_connection_info("custom-legacy-erp")
# conn.base_url, conn.headers, and conn.auth_params are all resolved
```

"Self-coded skills inherit this interface automatically. When an agent generates new integration code, it uses the same get_token() call. No OAuth implementation. No certificate handling. No token refresh logic. The skill code is pure business logic."

Self-Coding Pipeline

The self-coding pipeline is triggered when an agent identifies a task that requires external system access for which no adequate skill exists.

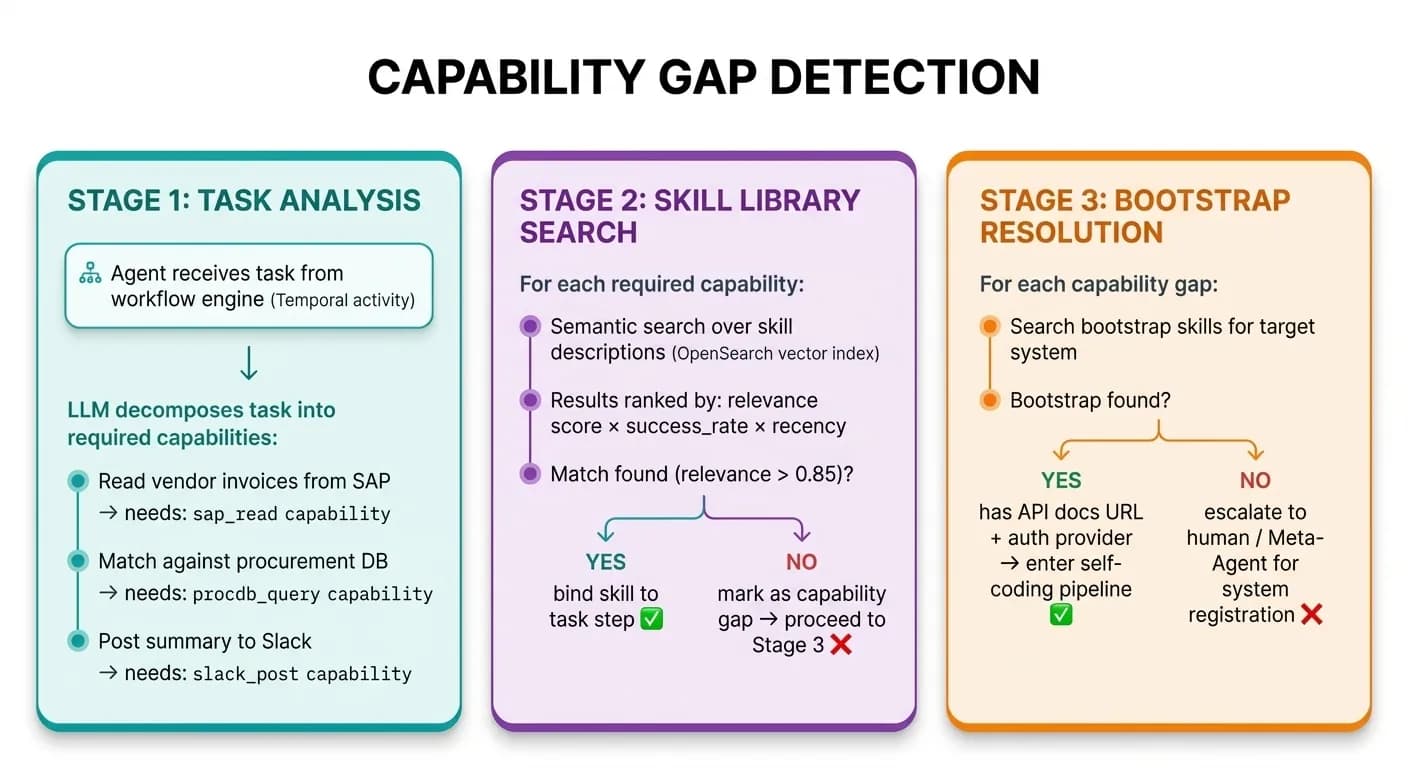

4.1 Trigger: Capability Gap Detection

The detection logic operates in three stages — task analysis, skill library search, and bootstrap resolution:

Stage 1

Task Analysis

The agent receives a task from the workflow engine (Temporal activity). An LLM decomposes the task into required capabilities — for example, sap_read, procdb_query, and slack_post.

Stage 2

Skill Library Search

For each required capability, the platform runs a semantic search over skill descriptions ranked by relevance × success_rate × recency. If relevance exceeds 0.85, the skill is bound to the task step.

Stage 3

Bootstrap Resolution

When no matching skill is found, the agent searches bootstrap skills for the target system. If a bootstrap exists with API docs and an auth provider, the self-coding pipeline begins. Otherwise, the gap is escalated to a human or the Meta-Agent.
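The three stages reduce to a small decision function. The sketch below assumes each candidate carries `relevance`, `success_rate`, and `recency` values in [0, 1]; the `Candidate` class and `resolve` function are hypothetical names, and only the ranking product and the 0.85 threshold come from the text above.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    relevance: float     # semantic similarity in [0, 1]
    success_rate: float  # historical success in [0, 1]
    recency: float       # freshness weight in [0, 1]

    @property
    def score(self) -> float:
        return self.relevance * self.success_rate * self.recency


def resolve(candidates, has_bootstrap, threshold=0.85):
    """Return (action, skill_name) for one required capability."""
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    for cand in ranked:
        if cand.relevance > threshold:
            return ("bind", cand.name)       # Stage 2: adequate skill found
    if has_bootstrap:
        return ("self_code", None)           # Stage 3: start the self-coding pipeline
    return ("escalate", None)                # no bootstrap: human or Meta-Agent
```

Ranking by the product while gating on relevance alone means a highly reliable but only loosely related skill is never bound by accident.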

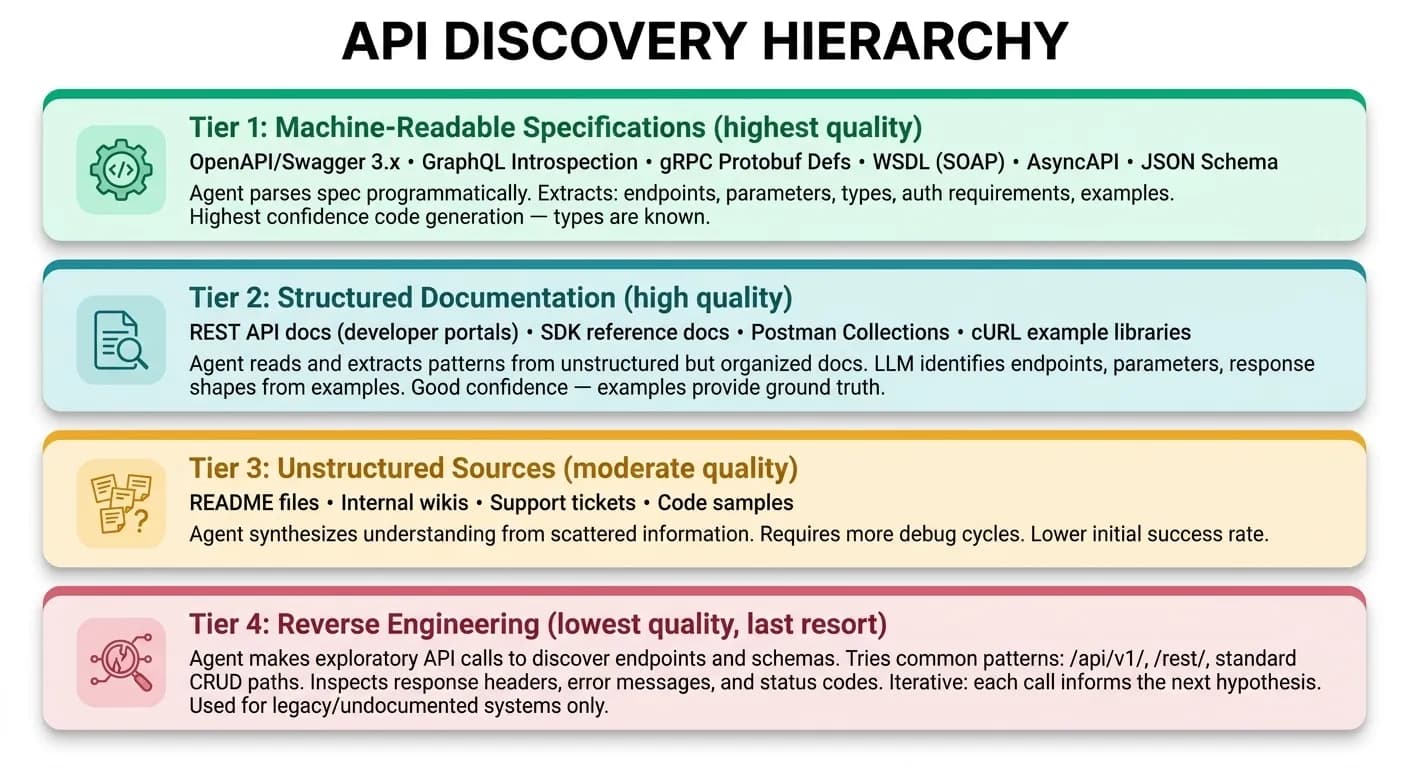

4.2 API Discovery and Comprehension

Once a bootstrap skill is identified, the agent enters the API discovery phase. This phase supports a hierarchy of documentation sources, from most structured to least.

The agent does not attempt to understand the entire API surface. It uses the task description to focus on only the endpoints required for the current operation. A typical enterprise API exposes 150–500 operations; the agent may need 2–5 of them. This is fundamental to avoiding the context window explosion that plagues MCP registries.

4.3 Code Generation

The agent generates integration code in a constrained code synthesis session. The generation prompt includes:

1. Task description: what the skill needs to accomplish

2. API reference: extracted endpoint specifications, parameter types, response schemas

3. Auth reference: the provider name from the bootstrap skill (not credentials)

4. Skill template: the standard execute() function signature and error handling pattern

5. Platform libraries: available imports are zenera.auth and zenera.logging; file I/O uses standard POSIX paths (/data/*), and the transactional runtime makes storage transparent

Example generated skill

```python
# Generated skill: execute.py
# Skill: fetch_vendor_invoices_sap v1
# Generated by: agent/supply-chain-monitor
# Source: OpenAPI spec at https://api.sap.com/api/API_BUSINESS_PARTNER/resource
# Timestamp: 2026-03-08T14:22:00Z

import requests

from zenera.auth import get_token
from zenera.logging import skill_logger

logger = skill_logger(__name__)


def execute(params: dict, context: dict) -> dict:
    """
    Retrieves vendor invoices from SAP S/4HANA.
    Handles pagination automatically. Normalizes currency.

    Args:
        params: {date_from, date_to, vendor_ids?, currency?}
        context: {run_id, agent_id, workflow_id}

    Returns:
        {invoices: [...], total_count: int, pagination_complete: bool}
    """
    token = get_token("sap-s4hana-prod")
    base_url = "https://myinstance.s4hana.cloud.sap"
    target_currency = params.get("currency", "USD")

    all_invoices = []
    skip = 0
    page_size = 100
    pagination_complete = True

    while True:
        query_params = {
            "$filter": (
                f"PostingDate ge datetime'{params['date_from']}T00:00:00' "
                f"and PostingDate le datetime'{params['date_to']}T23:59:59'"
            ),
            "$top": page_size,
            "$skip": skip,
            "$select": "InvoiceID,VendorID,VendorName,Amount,Currency,PostingDate,LineItems",
            "$expand": "LineItems",
        }

        if params.get("vendor_ids"):
            vendor_filter = " or ".join(
                f"VendorID eq '{vid}'" for vid in params["vendor_ids"]
            )
            query_params["$filter"] += f" and ({vendor_filter})"

        logger.info(f"Fetching page at skip={skip}", extra={"run_id": context["run_id"]})

        response = requests.get(
            f"{base_url}/sap/opu/odata/sap/API_BUSINESS_PARTNER/A_VendorInvoice",
            params=query_params,
            headers={
                "Authorization": f"Bearer {token}",
                "Accept": "application/json",
                "sap-language": "en",
            },
            timeout=30,
        )
        response.raise_for_status()

        data = response.json()
        results = data.get("d", {}).get("results", [])

        for inv in results:
            normalized = {
                "invoice_id": inv["InvoiceID"],
                "vendor_id": inv["VendorID"],
                "vendor_name": inv["VendorName"],
                "amount": float(inv["Amount"]),
                "currency": target_currency,
                "date": inv["PostingDate"],
                "line_items": [
                    {
                        "description": li.get("Description", ""),
                        "amount": float(li.get("Amount", 0)),
                        "quantity": int(li.get("Quantity", 1)),
                    }
                    for li in inv.get("LineItems", {}).get("results", [])
                ],
            }

            if inv["Currency"] != target_currency:
                normalized["amount"] = _convert_currency(
                    normalized["amount"], inv["Currency"], target_currency
                )

            all_invoices.append(normalized)

        # A short page means the server has no more results.
        if len(results) < page_size:
            break

        skip += page_size

        if skip > 1_000_000:
            logger.warning("Pagination safety limit reached", extra={"skip": skip})
            pagination_complete = False  # stopped early; results may be partial
            break

    return {
        "invoices": all_invoices,
        "total_count": len(all_invoices),
        "pagination_complete": pagination_complete,
    }


def _convert_currency(amount: float, from_currency: str, to_currency: str) -> float:
    """Simple currency conversion using SAP exchange rates."""
    token = get_token("sap-s4hana-prod")
    resp = requests.get(
        "https://myinstance.s4hana.cloud.sap/sap/opu/odata/sap/API_EXCHANGERATE_SRV",
        params={
            "$filter": (
                f"FromCurrency eq '{from_currency}' "
                f"and ToCurrency eq '{to_currency}'"
            ),
            "$top": 1,
            "$orderby": "ValidFrom desc",
        },
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
        timeout=10,
    )
    resp.raise_for_status()
    rate = float(resp.json()["d"]["results"][0]["ExchangeRate"])
    return round(amount * rate, 2)
```

4.4 Sandbox Execution Environment

Generated code never executes in the agent's process. It runs in an isolated sandbox — a short-lived container with constrained resources, network policies, and filesystem isolation:

Sandbox Orchestrator

- Provisions ephemeral container (gVisor-sandboxed)

- Installs dependencies in isolated venv

- Injects auth proxy — skill calls get_token() → auth gateway

- Network policy: ALLOW auth gateway + target API endpoints; DENY everything else (no lateral movement, no internet access)

- Mounts skill code as read-only

- Executes with resource limits enforced via cgroups

- Captures: stdout, stderr, return value, execution time, exit code

- Container destroyed after execution — completely stateless
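The capture contract in the list above (stdout, stderr, exit code, execution time) can be illustrated with a plain child process. This sketch is explicitly not a sandbox: it has no gVisor, cgroup, or network isolation, and `run_captured` is a hypothetical helper that only shows what an orchestrator would record.

```python
import subprocess
import sys
import time


def run_captured(code: str, timeout_s: float = 10.0) -> dict:
    """Run `code` in a separate Python process and capture its outputs."""
    started = time.monotonic()
    try:
        proc = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout_s,      # wall-clock limit; the child is killed on expiry
        )
        status = {
            "exit_code": proc.returncode,
            "stdout": proc.stdout,
            "stderr": proc.stderr,
        }
    except subprocess.TimeoutExpired:
        status = {"exit_code": None, "stdout": "", "stderr": "timeout"}
    status["execution_time_s"] = time.monotonic() - started
    return status
```

A failing run returns a non-zero exit code with the traceback in `stderr`, which is the raw material a debug cycle would need.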

4.5 Debug Loop

When sandbox execution fails, the agent enters a debug loop. It has access to the full error context (traceback, HTTP response bodies, stderr output) and applies the same code-debug-retry cycle that coding agents use in software development.

The debug loop is bounded: a configurable maximum number of attempts (default: 5) prevents infinite iteration. If the agent cannot produce working code within the budget, it escalates to the Meta-Agent or a human operator, providing the full debug trace as context.
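A bounded loop of this kind might look as follows. It is a sketch under stated assumptions: `generate(feedback)` and `run(code)` are hypothetical callables standing in for the LLM code-synthesis step and the sandbox, and the return shape is invented for illustration. Only the default budget of 5 attempts and the escalation on exhaustion come from the text.

```python
def debug_loop(generate, run, max_attempts=5):
    """Generate code, run it, and feed failures back until success or budget exhausted.

    generate(feedback) -> source code; feedback is None on the first attempt.
    run(code) -> (ok, error_context).
    """
    trace = []
    feedback = None
    for attempt in range(1, max_attempts + 1):
        code = generate(feedback)
        ok, error = run(code)
        trace.append({"attempt": attempt, "ok": ok, "error": error})
        if ok:
            return {"status": "success", "code": code, "trace": trace}
        feedback = error  # the next generation sees the previous failure
    # Budget exhausted: hand the full trace to the Meta-Agent or a human.
    return {"status": "escalate", "code": None, "trace": trace}
```

Returning the trace in both outcomes keeps the escalation path informed and lets successful runs contribute to debug-loop statistics.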

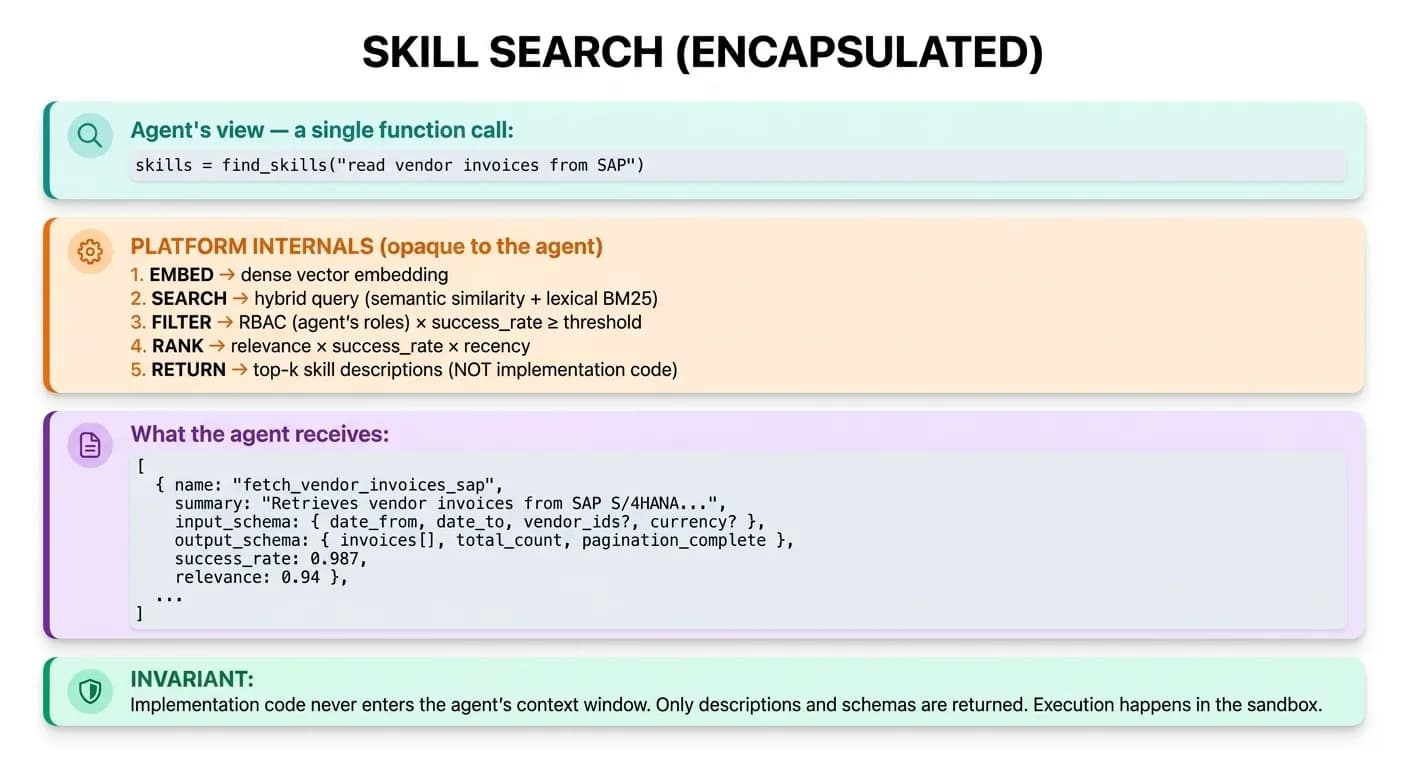

Skill Discovery: Semantic Search Architecture

Agents find skills through natural language search — not by scanning flat registries. Implementation code never enters the context window.

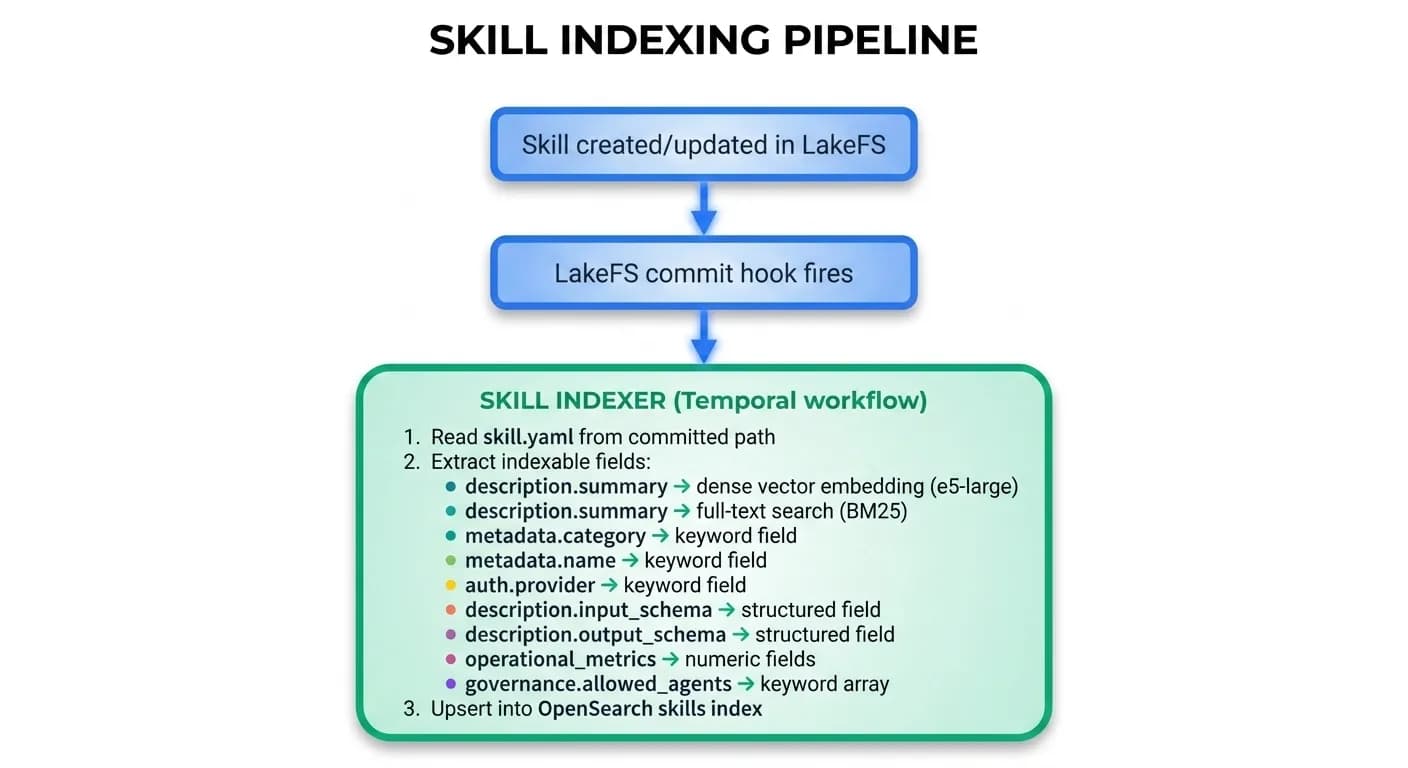

5.1 Indexing Pipeline

When a skill is created or updated, its metadata is indexed in OpenSearch for semantic retrieval. A LakeFS commit hook fires the indexing workflow automatically.

5.2 Search Model

Skill search is fully encapsulated inside the platform. Agents call a single function; the platform handles embedding, ranking, RBAC, and relevance scoring internally.

"Implementation code never enters the agent's context window. Only descriptions and schemas are returned. Execution happens in the sandbox."

5.3 Context Efficiency Comparison

The search architecture ensures minimal context window usage. For an agent handling 20 tasks per hour, each requiring 2–3 integrations, the difference compounds significantly:

| Step | MCP (Traditional) | Zenera Skills |
|---|---|---|
| Tool registry load | All tool schemas pre-loaded: 150K+ tokens | Nothing pre-loaded: 0 tokens |
| Task arrives | Model scans all tools in context: O(n) | Semantic search (external): O(1) |
| Match found | Already in context (wastefully) | Load matched skill description: ~200–500 tokens |
| Execution | Model generates JSON tool call inline | Skill code executes in sandbox (0 context tokens) |
| Total context cost per integration call | 150K+ (amortized) | 200–500 tokens |

- MCP baseline: 150K+ tokens of constant overhead

- Zenera per hour: ~20K tokens for 20 tasks × 2.5 integrations

- Savings: ~87% reduction in integration-related context
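The headline figures follow from simple arithmetic. The calculation below assumes 400 tokens per matched skill description, a value chosen here from within the 200–500 range in the table, and compares the hourly Zenera total against the 150K MCP baseline as the summary figures do.

```python
TASKS_PER_HOUR = 20
INTEGRATIONS_PER_TASK = 2.5    # midpoint of "2–3 integrations"
TOKENS_PER_SKILL = 400         # assumed; the table gives a 200–500 range
MCP_BASELINE_TOKENS = 150_000

zenera_tokens_per_hour = TASKS_PER_HOUR * INTEGRATIONS_PER_TASK * TOKENS_PER_SKILL
savings = 1 - zenera_tokens_per_hour / MCP_BASELINE_TOKENS

print(f"{zenera_tokens_per_hour:,.0f} tokens/hour, {savings:.0%} reduction")
# prints "20,000 tokens/hour, 87% reduction"
```

At the ends of the 200–500 range the hourly total moves between 10K and 25K tokens, so the reduction stays between roughly 83% and 93%.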

Skill Versioning and Lifecycle

Skills are versioned objects in LakeFS. Every mutation — from self-coding agents, Meta-Agent improvements, or human edits — creates a new version on a branch, merged atomically.

6.1 Version Management

Each generation or debug cycle creates a new branch. On success, the branch merges atomically into main. On failure, the branch is discarded, so main is never in a partial state.

Atomic Guarantees

- Each version is a complete, self-consistent skill

- Merges to main are atomic — no partial updates

- Rollback to any previous version is instant

- Concurrent agent edits use optimistic concurrency (conflict → retry)
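Optimistic concurrency here means "commit only if the head has not moved since I read it". The following in-memory sketch shows the idea; `SkillStore`, `VersionConflict`, and `commit_with_retry` are hypothetical names and do not represent the LakeFS API.

```python
class VersionConflict(Exception):
    pass


class SkillStore:
    """In-memory stand-in for a versioned skill store."""

    def __init__(self):
        self._versions = {}  # name -> list of contents; list index + 1 == version

    def head(self, name):
        return len(self._versions.get(name, []))

    def commit(self, name, content, expected_head):
        # Compare-and-swap: reject the write if someone else committed first.
        if self.head(name) != expected_head:
            raise VersionConflict(name)
        self._versions.setdefault(name, []).append(content)
        return self.head(name)

    def read(self, name, version):
        # Old versions are never overwritten, so rollback is just a read.
        return self._versions[name][version - 1]


def commit_with_retry(store, name, make_content, max_retries=3):
    for _ in range(max_retries):
        base = store.head(name)
        try:
            return store.commit(name, make_content(base), expected_head=base)
        except VersionConflict:
            continue  # re-read the head and rebuild on top of the latest version
    raise VersionConflict(name)
```

Because earlier versions are append-only, a stale writer can lose a race but can never corrupt what another agent already committed.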

6.2 Forking

When an agent needs a variation of an existing skill — same target system but a different operation — it forks the skill rather than modifying it:

```
# Fork relationship
fetch_vendor_invoices_sap (v3)                      # Original
└── fork → update_vendor_payment_status_sap (v1)    # Forked skill
      fork_of: fetch_vendor_invoices_sap
      # Inherits: auth config, base_url, OData patterns
      # New: write operation instead of read, different endpoint
```

Forks preserve the lineage graph. The platform can trace which skills derive from which, identify common patterns, and suggest deduplication when forks diverge minimally.

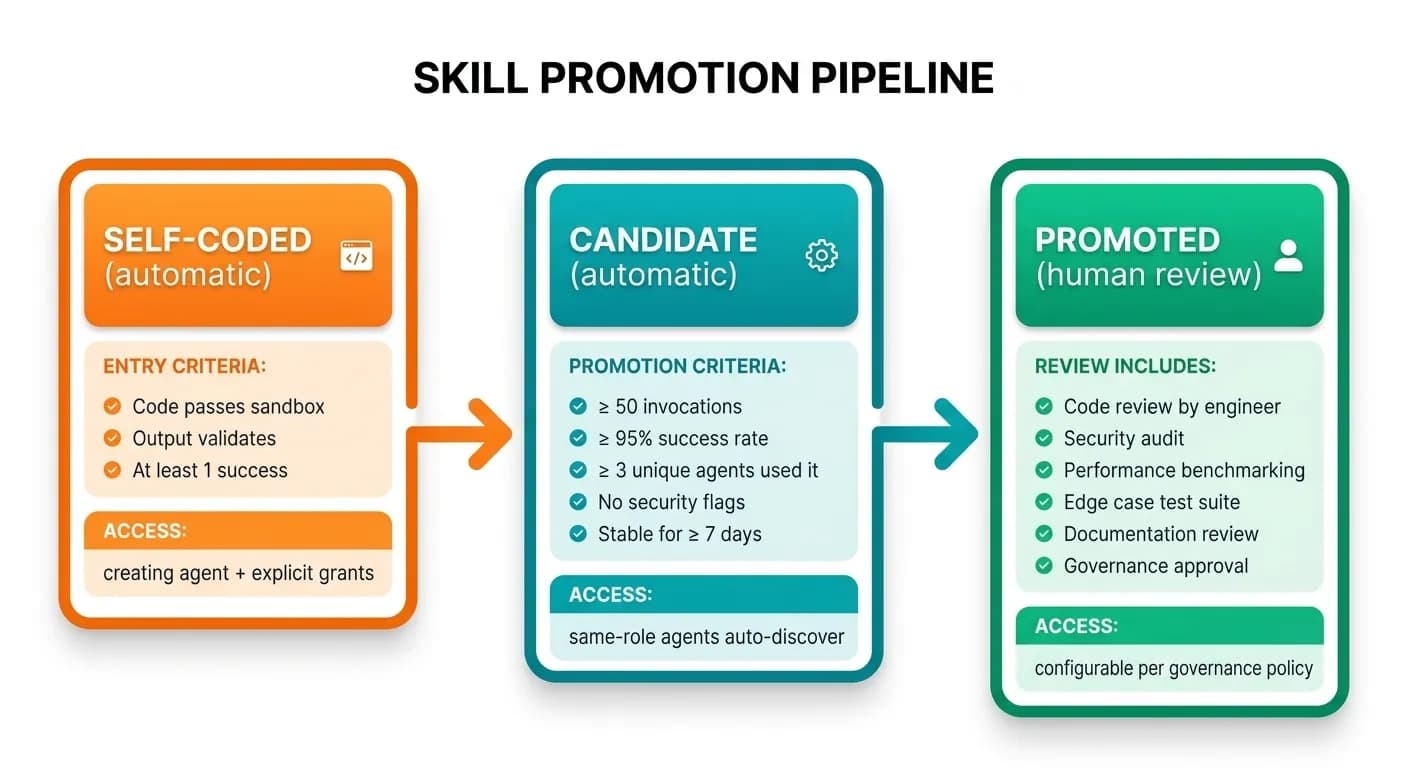

6.3 Promotion Pipeline

Skills that accumulate sufficient operational history can be promoted from self-coded to production-grade. Each stage has explicit entry criteria, access controls, and review requirements:

Self-Coded

Automatic

- Code passes sandbox

- Output validates

- At least 1 success

Access: Creating agent + explicit grants

Candidate

Automatic

- ≥ 50 invocations

- ≥ 95% success rate

- ≥ 3 unique agents used it

- No security flags

- Stable for ≥ 7 days

Access: Same-role agents auto-discover

Promoted

Human Review

- Code review by engineer

- Security audit

- Performance benchmarking

- Edge case test suite

- Documentation review

- Governance approval

Access: Configurable per governance policy
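The automatic Candidate gate is a pure threshold check, which the sketch below spells out. The `SkillStats` record and `is_candidate` function are hypothetical; the thresholds are the ones listed for the Candidate stage.

```python
from dataclasses import dataclass


@dataclass
class SkillStats:
    invocations: int
    successes: int
    unique_agents: int
    security_flags: int
    days_stable: int


def is_candidate(s: SkillStats) -> bool:
    """Check the automatic Candidate thresholds."""
    if s.invocations < 50:
        return False  # also avoids dividing by zero below
    success_rate = s.successes / s.invocations
    return (
        success_rate >= 0.95
        and s.unique_agents >= 3
        and s.security_flags == 0
        and s.days_stable >= 7
    )
```

Promotion to the final stage is deliberately absent from this check, since it depends on human review rather than on metrics.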

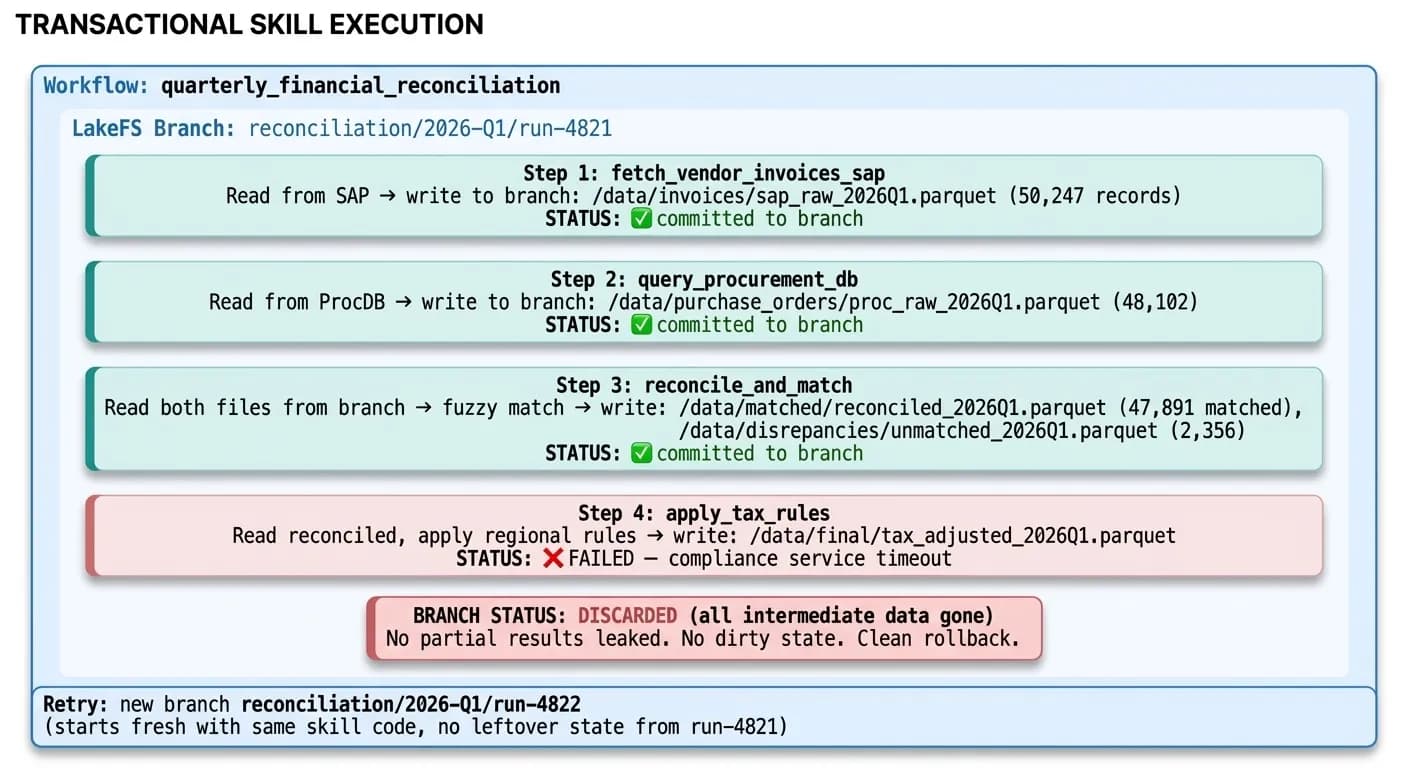

Transactional Integration With LakeFS

Skills can participate in LakeFS transactions. Intermediate writes land on an isolated branch. If any downstream step fails, the branch is discarded — no partial state corruption, no dirty data.

7.1 Skills as Transactional Participants

The example below shows a four-step quarterly reconciliation workflow. Steps 1–3 succeed and commit to the branch. Step 4 fails. The entire branch is discarded — no partial results leak, no dirty state persists. The next retry starts completely clean:

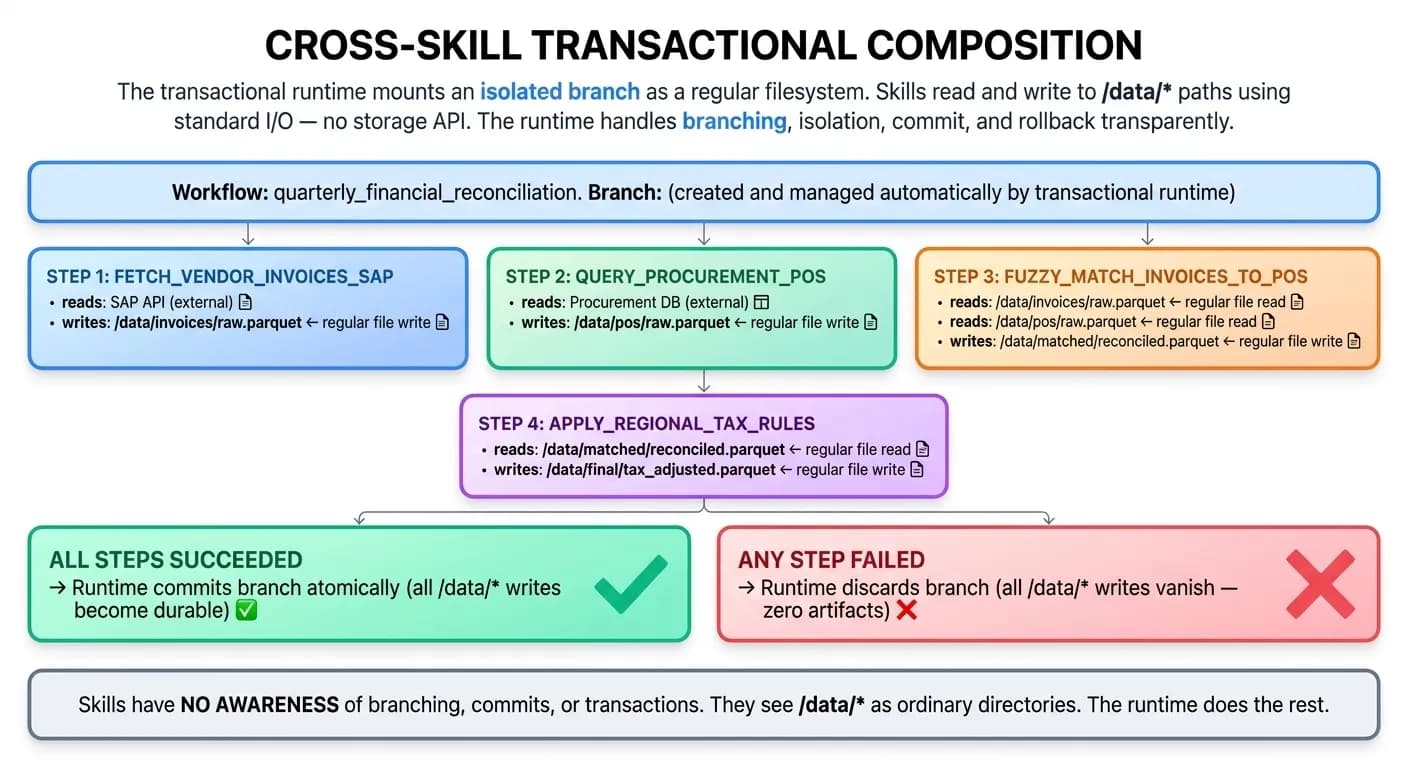

7.2 Cross-Skill Transactional Composition

Skills within a workflow share an isolated storage branch. Each skill reads from the previous skill's output and writes its own results — all to standard /data/* paths. Skills have no awareness of branches, commits, or transactions — they see ordinary directories:
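The branch-per-workflow behavior can be mimicked with a copy-on-enter context manager. This is a toy model, not the LakeFS API: `Repo` is a hypothetical key-value store, and the shallow copy means it only isolates top-level keys.

```python
from contextlib import contextmanager


class Repo:
    """Toy repository with branch-and-merge semantics over a dict of paths."""

    def __init__(self):
        self.main = {}

    @contextmanager
    def transaction(self):
        branch = dict(self.main)  # isolated working copy; skills write here
        yield branch              # an exception in the caller propagates from this line
        self.main = branch        # reached only if every step succeeded


repo = Repo()

# Successful workflow: every write becomes visible at once on exit.
with repo.transaction() as data:
    data["/data/invoices/raw"] = ["inv-1", "inv-2"]
    data["/data/pos/raw"] = ["po-1"]

# Failing workflow: the partial write never reaches main.
try:
    with repo.transaction() as data:
        data["/data/matched/reconciled"] = "partial"
        raise RuntimeError("step 4 failed")
except RuntimeError:
    pass
```

The skill code inside the `with` block only touches ordinary paths; whether the result is kept or discarded is decided entirely by the surrounding runtime.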

"Skills have no awareness of branching, commits, or transactions. They see /data/* as ordinary directories. The runtime does the rest."

Meta-Agent Skill Generation

The Meta-Agent generates skills proactively during agent system design — before agents are deployed to production. This is the design-time complement to the runtime self-coding pipeline.

8.1 Design-Time Skill Synthesis

The Meta-Agent pipeline runs five phases: requirement extraction, API discovery, code generation, automated testing, and commit to the skill library.

8.2 Precision Over Breadth

The Meta-Agent extracts only the operations the agent system actually needs. An MCP server mirrors the entire API surface. The numbers for a SAP integration illustrate the gap:

| Metric | MCP Server (SAP) | Meta-Agent Skills |
|---|---|---|
| Operations exposed | 312 (full API surface) | 4 (task-specific) |
| Tokens in context | ~18,000 (tool descriptions) | ~600 (4 skill descriptions) |
| Maintenance surface | 312 tool definitions to keep in sync | 4 skill implementations |
| Test coverage | Generic (if any) | Task-specific, with real data fixtures |
| Time to build | 80–200 hours (estimated for SAP MCP server) | 10–30 minutes (Meta-Agent automated) |

Security Model

Self-coded skills introduce unique security considerations. The architecture addresses them through five independent layers — from code generation constraints to governance and audit.

9.1 Defense in Depth

Layer 1

Code Generation Constraints

- Allowed imports whitelist (requests, json, csv, pandas, etc.)

- Forbidden patterns (os.system, subprocess, eval, exec, __import__)

- Required patterns (must use zenera.auth for credentials)

- Static analysis scan before sandbox execution

- AST-level validation: no code execution outside function boundaries
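An import allowlist and a forbidden-call scan can both be expressed with Python's standard `ast` module. The sketch below is a partial illustration, not the platform's scanner: the exact allowlist contents beyond those named above (`datetime`, `zenera`) are assumptions, and it does not check the required-pattern or function-boundary rules.

```python
import ast

ALLOWED_IMPORTS = {"requests", "json", "csv", "pandas", "datetime", "zenera"}
FORBIDDEN_CALLS = {"eval", "exec", "__import__", "os.system"}


def _dotted(node):
    """Render Name/Attribute chains such as os.system as a dotted string."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = _dotted(node.value)
        return f"{base}.{node.attr}" if base else None
    return None


def scan(source: str) -> list[str]:
    """Return a list of policy violations found in `source`."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            modules = [node.module or ""]  # relative imports have no module name
        else:
            modules = []
        for mod in modules:
            if mod.split(".")[0] not in ALLOWED_IMPORTS:
                violations.append(f"import not allowed: {mod}")
        if isinstance(node, ast.Call) and _dotted(node.func) in FORBIDDEN_CALLS:
            violations.append(f"forbidden call: {_dotted(node.func)}")
    return violations
```

Working on the syntax tree rather than on raw text avoids false positives from comments and string literals, although determined obfuscation still calls for the sandbox layer that follows.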

Layer 2

Sandbox Isolation

- gVisor-sandboxed container (not just Docker — kernel-level isolation)

- No root access, read-only filesystem (except /tmp)

- CPU, memory, and time limits enforced via cgroups

- Network egress restricted to auth gateway, target API endpoints, and platform storage only

- No access to other containers, host network, or K8s API

Layer 3

Auth Gateway RBAC

- Agent must be authorized for the requested auth provider

- Tokens are scoped to minimum necessary permissions

- Rate limits per agent per provider

- All token grants written to immutable audit log

Layer 4

Output Validation

- Skill output validated against declared output_schema

- Data classification check: skill cannot return restricted data to an agent without matching clearance

- Output size limits enforced
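Schema and size checks on a skill's return value can be sketched as below. This assumes a deliberately simple flat `{field: type}` schema and a hypothetical `max_items` limit; the platform's actual output_schema format is not specified in this document, and the data classification check is out of scope here.

```python
def validate_output(result: dict, schema: dict, max_items: int = 10_000) -> list[str]:
    """Check `result` against a flat {field: type} schema and a list-size limit."""
    errors = []
    for name, expected in schema.items():
        if name not in result:
            errors.append(f"missing field: {name}")
        elif not isinstance(result[name], expected):
            errors.append(f"{name}: expected {expected.__name__}")
    # Undeclared fields are rejected so nothing leaks past the schema.
    for name in sorted(set(result) - set(schema)):
        errors.append(f"unexpected field: {name}")
    for name, value in result.items():
        if isinstance(value, list) and len(value) > max_items:
            errors.append(f"{name}: exceeds {max_items} items")
    return errors


# Schema matching the return value documented for fetch_vendor_invoices_sap.
SCHEMA = {"invoices": list, "total_count": int, "pagination_complete": bool}
```

An empty list from `validate_output` means the result may be handed back to the agent; anything else blocks it.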

Layer 5

Governance and Audit

- Every skill creation logged with: agent ID, timestamp, source docs, generated code hash, test results

- Skills can be flagged for human review (auto-flag on new auth provider access, writes to production systems, high-risk operations)

- Promoted skills require explicit human approval

- All skill invocations logged with: run_id, agent_id, params (redacted), result status, execution time, resource usage

9.2 Comparison to MCP Security

| Threat | MCP Exposure | Skills Mitigation |
|---|---|---|
| Tool poisoning | Malicious instructions in tool descriptions exploit LLM sycophancy | Skill descriptions written by the agent or Meta-Agent, not untrusted third parties; stored in governed LakeFS |
| Supply chain attacks | Unvetted third-party MCP servers with hidden backdoors | Skills generated from raw API docs, not third-party packages; all code is visible and auditable |
| Credential exposure | MCP servers may log or leak credentials passed through them | Auth gateway never exposes raw credentials to skill code; bearer tokens are short-lived and scoped |
| Lateral movement | Compromised MCP server can access other tools via shared context | Sandbox network policy restricts egress to declared target only; no lateral movement possible |
| Context contamination | Malicious content in MCP responses persists across sessions | Skill output validated against schema; content doesn't enter LLM context (only structured result) |

Composite Skills: Multi-System Orchestration

For workflows that span multiple external systems, Zenera supports composite skills — skills whose implementation calls other skills, expressed as DAGs with parallelism and transactional guarantees.

10.1 Composite Skill Architecture

A composite skill declares its dependencies and expresses a step DAG in pipeline.yaml. Steps with parallel_with run concurrently. Steps with depends_on wait for predecessors. The entire pipeline runs within a transactional branch — if any step fails, the branch is discarded:
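Before the definition files themselves, the scheduling semantics just described can be sketched in a few lines. This is an illustration, not the platform's scheduler: `execution_waves` is a hypothetical helper that derives concurrency purely from depends_on, treating parallel_with as implied whenever two steps have no dependency between them.

```python
def execution_waves(steps):
    """Group steps into waves; every step in a wave can run concurrently.

    `steps` maps a step name to the list of step names it depends on.
    """
    remaining = {name: set(deps) for name, deps in steps.items()}
    done, waves = set(), []
    while remaining:
        ready = sorted(n for n, deps in remaining.items() if deps <= done)
        if not ready:
            raise ValueError("cycle or unknown dependency in pipeline")
        waves.append(ready)
        done.update(ready)
        for name in ready:
            del remaining[name]
    return waves


# Dependency structure of the reconciliation example.
pipeline = {
    "fetch_invoices": [],
    "fetch_pos": [],
    "match": ["fetch_invoices", "fetch_pos"],
    "tax": ["match"],
    "report": ["tax"],
    "notify": ["report"],
}
```

For this pipeline the first wave contains both fetch steps, followed by match, tax, report, and notify one at a time.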

skill.yaml

```yaml
# skill.yaml for a composite skill
apiVersion: zenera.ai/v1
kind: Skill
metadata:
  name: quarterly_financial_reconciliation
  category: finance/reconciliation
  version: 2
  origin: meta-agent
description:
  summary: >
    End-to-end quarterly financial reconciliation across SAP and procurement
    systems. Fetches invoices and POs, performs fuzzy matching, applies tax
    rules, generates discrepancy report, and notifies finance team.
implementation:
  language: composite
  entrypoint: pipeline.yaml
  dependencies:
    - fetch_vendor_invoices_sap
    - query_procurement_pos
    - fuzzy_match_invoices_to_pos
    - apply_regional_tax_rules
    - generate_discrepancy_report
    - post_to_slack_channel
```

pipeline.yaml

```yaml
# pipeline.yaml: composite skill definition
steps:
  - name: fetch_invoices
    skill: fetch_vendor_invoices_sap
    params:
      date_from: "{{ params.quarter_start }}"
      date_to: "{{ params.quarter_end }}"
    output_path: "data/invoices/raw.parquet"

  - name: fetch_pos
    skill: query_procurement_pos
    params:
      date_range: ["{{ params.quarter_start }}", "{{ params.quarter_end }}"]
    output_path: "data/pos/raw.parquet"
    parallel_with: fetch_invoices  # runs concurrently

  - name: match
    skill: fuzzy_match_invoices_to_pos
    depends_on: [fetch_invoices, fetch_pos]
    input_paths:
      - "data/invoices/raw.parquet"
      - "data/pos/raw.parquet"
    output_path: "data/matched/reconciled.parquet"

  - name: tax
    skill: apply_regional_tax_rules
    depends_on: [match]
    input_path: "data/matched/reconciled.parquet"
    output_path: "data/final/tax_adjusted.parquet"

  - name: report
    skill: generate_discrepancy_report
    depends_on: [tax]
    input_path: "data/final/tax_adjusted.parquet"
    output_path: "data/reports/Q1_2026_discrepancies.pdf"

  - name: notify
    skill: post_to_slack_channel
    depends_on: [report]
    params:
      channel: "#finance-reconciliation"
      message: "Q1 2026 reconciliation complete. {{ steps.match.output.unmatched_count }} discrepancies found."
      attachment_path: "data/reports/Q1_2026_discrepancies.pdf"

transaction:
  on_success: commit
  on_failure: discard
```

Operational Metrics and Observability

Every skill invocation emits structured OpenTelemetry telemetry. The platform aggregates this into dashboards covering skill health, self-coding activity, and integration coverage.

11.1 Skill-Level Telemetry

Each span captures identity, performance, resource usage, and result status — enough to detect degradation, attribute latency, and audit security events:

```json
{
  "trace_id": "abc123...",
  "span_name": "skill.execute",
  "attributes": {
    "skill.name": "fetch_vendor_invoices_sap",
    "skill.version": 3,
    "skill.origin": "self-coded",
    "skill.category": "erp/sap",
    "agent.id": "agent/supply-chain-monitor",
    "workflow.id": "reconciliation-Q1-2026",
    "run.id": "run-4821",
    "auth.provider": "sap-s4hana-prod",
    "sandbox.cpu_seconds": 0.3,
    "sandbox.peak_memory_mb": 128,
    "sandbox.execution_time_ms": 1180,
    "result.status": "success",
    "result.record_count": 1247,
    "result.pagination_pages": 13
  }
}
```

- Success rates over time: detect degradation before it impacts workflows

- Latency distributions: identify slow integrations per skill

- Self-coding activity: new skills generated vs. reused

- Debug loop statistics: average attempts before success, common error patterns

11.2 Self-Coding Activity Dashboard

The dashboard surfaces three views: success rate distribution, self-coding activity over the past 30 days, and integration coverage across registered enterprise systems:

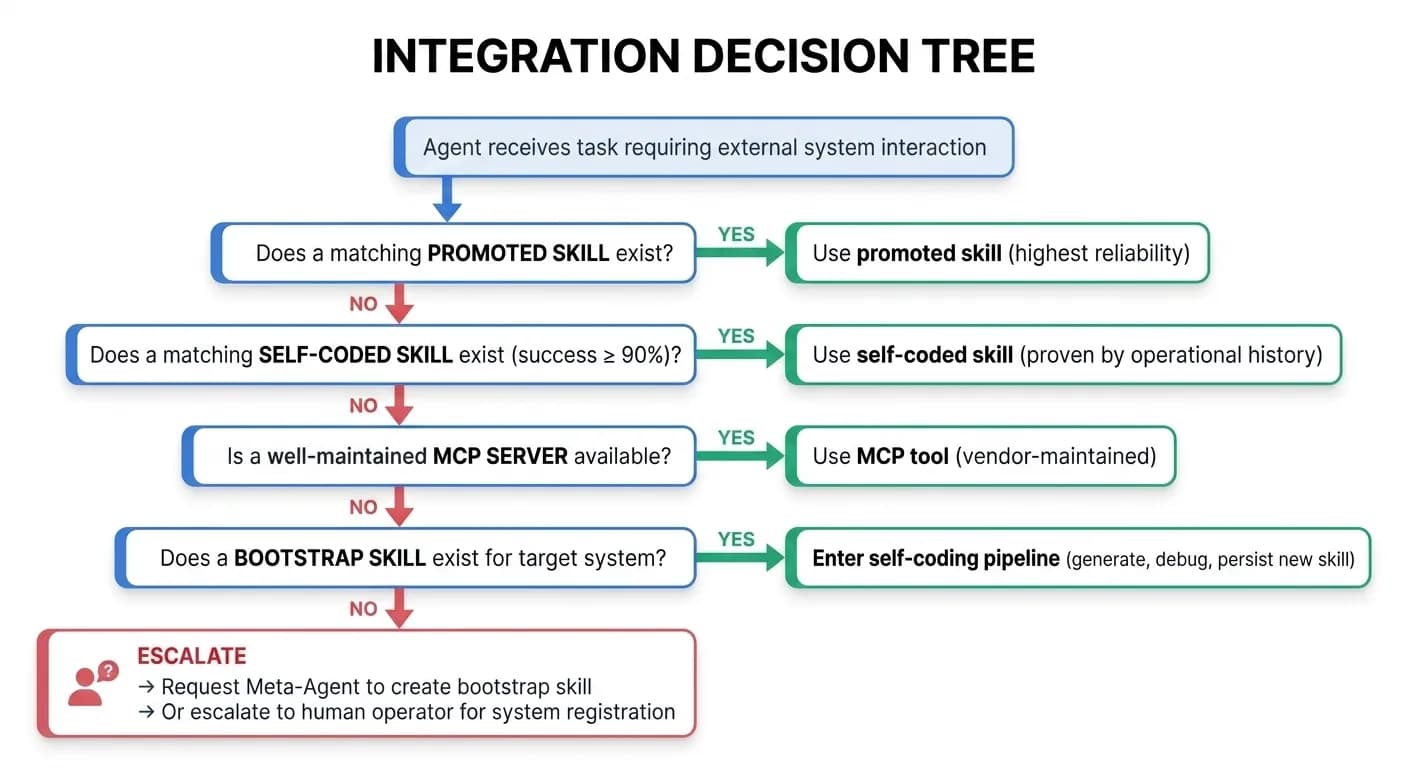

Integration Decision Matrix

The platform runtime automatically selects the integration approach based on availability and task requirements — promoted skills first, self-coding as the last resort before human escalation.

12.1 When to Use Each Approach
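The selection order stated above (promoted skills first, self-coding as the last resort before human escalation) can be sketched as a simple preference walk. The position of Meta-Agent and existing self-coded skills between those two ends is an assumption made here for illustration, as are the `choose_approach` function and its return values.

```python
# Most trusted origin first; the middle of this ordering is assumed.
PREFERENCE = ["promoted", "meta-agent", "self-coded"]


def choose_approach(available: dict) -> tuple:
    """Pick an integration approach for one capability.

    `available` maps a skill origin to the name of a matching skill;
    an origin with no matching skill is simply absent.
    """
    for origin in PREFERENCE:
        if origin in available:
            return ("invoke", available[origin])
    if "bootstrap" in available:
        # No usable skill, but enough material to generate one at runtime.
        return ("self_code", available["bootstrap"])
    return ("escalate", None)
```

With both a promoted and a self-coded match available, the promoted skill wins; with only a bootstrap skill, the self-coding pipeline starts; with nothing, the gap goes to a human.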

12.2 Complete Comparison Matrix

| Dimension | MCP Tools | Self-Coded Skills | Meta-Agent Skills | Composite Skills |
|---|---|---|---|---|
| Creation | Vendor or engineer builds MCP server | Agent generates at runtime | Meta-Agent generates at design time | Agent or Meta-Agent composes from existing skills |
| API coverage | Full API surface (hundreds of operations) | Task-specific (2–5 operations) | Design-specific (3–8 operations) | N/A (orchestrates other skills) |
| Context cost | 5K–20K tokens per service | 200–500 tokens | 200–500 tokens | 300–800 tokens (pipeline description) |
| Build time | 60–650 hours (per MCP server) | 1–30 minutes (automated) | 10–60 minutes (automated) | Composed from existing skills |
| Maintenance | Manual (must track API changes) | Self-healing (agent re-generates) | Self-healing + design-time verification | Inherits from component skills |
| Testing | Varies (often minimal) | Auto-generated sandbox tests | Comprehensive auto-generated suite | End-to-end pipeline tests |
| Transactional | No (stateless tool calls) | Yes (LakeFS branch per workflow) | Yes | Yes (entire pipeline on one branch) |
| Legacy systems | Cannot integrate | Full support | Full support | Full support |

Summary

The self-coded integration architecture transforms integration from a supply-chain dependency into an emergent capability of the agent system itself.

- Skills as versioned, governed artifacts stored in transactional storage with full provenance and RBAC

- Authentication abstracted through a managed gateway; skills never handle credentials

- Sandboxed execution with defense-in-depth security: static analysis, gVisor isolation, network policy, output validation

- Semantic discovery replacing enumerative tool loading, with O(1) context cost instead of O(n)

- Self-healing integrations that detect API changes and regenerate code without human intervention

- Transactional composition via LakeFS branches: multi-skill pipelines with atomic commit/rollback

- Meta-Agent design-time generation complementing runtime self-coding for pre-tested, high-confidence skills

- Organic library growth where every agent task potentially expands integration coverage

"The enterprise integration problem was never a protocol problem. It was always an intelligence problem. Skills are the unit of integration for systems that think."
