From Tokens to Intelligence

Why Enterprise AI Projects Fail — and What Actually Works

The Enterprise AI Adoption Gap

The technology exists. The models are capable. So why isn't it working?

The Current Enterprise AI Stack

Step 1: Acquire LLM Access

- Self-hosted inference: NVIDIA NIM, vLLM, TensorRT-LLM on on-premise GPUs

- Cloud APIs: OpenAI, Anthropic Claude, Google Gemini, Azure OpenAI

- Hybrid approaches: Route between local and cloud based on sensitivity and cost
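
The hybrid option can be pictured as a small routing function. The sketch below is illustrative only: the `Request` fields, the `cloud_token_budget` threshold, and the backend labels are assumptions made for the example, not part of any vendor API.

```python
from dataclasses import dataclass

# Hypothetical backend labels; a real deployment would map these to actual clients.
LOCAL = "local"
CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    prompt: str
    contains_sensitive_data: bool  # assumed to be set by an upstream classifier
    estimated_tokens: int


def route(request: Request, cloud_token_budget: int = 4000) -> str:
    """Pick a backend: sensitive data stays local, large jobs stay local to cap cost."""
    if request.contains_sensitive_data:
        return LOCAL
    if request.estimated_tokens > cloud_token_budget:
        return LOCAL
    return CLOUD


print(route(Request("Summarize this patient record", True, 800)))
print(route(Request("Draft a product announcement", False, 800)))
```

Sensitivity is checked first so that a cheap request containing regulated data never leaves the local cluster.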

Step 2: Build Custom Solutions with Open-Source Tooling

- LangChain / LlamaIndex for orchestration

- Vector databases (Pinecone, Weaviate, Chroma) for retrieval

- Custom Python glue code connecting components

- Prompt engineering to coerce desired behavior

Step 3: Deploy RAG as the Primary Pattern

- Ingest enterprise documents into vector store

- Retrieve relevant chunks at query time

- Augment LLM context with retrieved content

- Hope the model synthesizes a useful response
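
The ingest, retrieve, and augment steps above can be sketched in a few lines. This is a toy illustration: word counts stand in for a learned embedding model, an in-memory list stands in for a vector database, and the sample documents are invented for the example. No LLM is called; the sketch stops at the augmented prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. Real systems use a learned model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def ingest(documents: list[str]) -> list[tuple[str, Counter]]:
    """Ingest: store each chunk alongside its vector."""
    return [(doc, embed(doc)) for doc in documents]


def retrieve(store: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Retrieve: return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(store, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def augment(query: str, chunks: list[str]) -> str:
    """Augment: place retrieved chunks in the prompt ahead of the question."""
    context = "\n".join(f"- {c}" for c in chunks)
    return f"Context:\n{context}\n\nQuestion: {query}"


store = ingest([
    "Refunds are processed within 14 days of the return request.",
    "The warehouse in Reno ships orders Monday through Friday.",
    "Employees accrue vacation at 1.5 days per month.",
])
query = "How long do refunds take?"
prompt = augment(query, retrieve(store, query, k=1))
print(prompt)
```

Everything after `augment` depends on the model reading the context correctly, which is the step the list above describes as hope.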

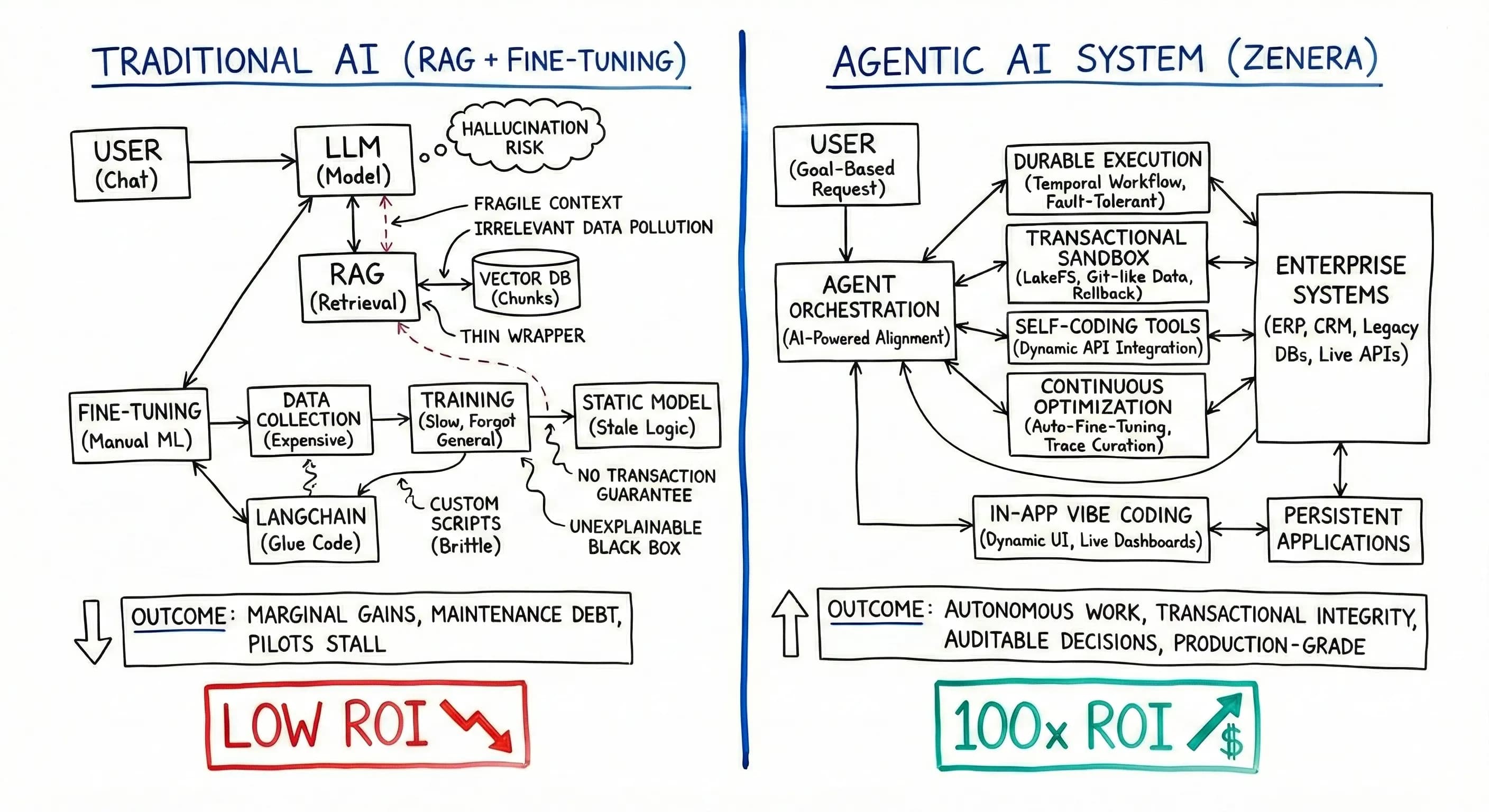

Why This Approach Systematically Fails

RAG Delivers Marginal Value

RAG is a thin wrapper over foundation models. It works around context window limitations but does not change what the model can do.

| Promise | Reality |
|---|---|
| "Ground responses in enterprise data" | Retrieval quality is fragile; irrelevant chunks pollute context |
| "Reduce hallucinations" | Hallucinations persist; now they're confidently attributed to documents |
| "Easy to implement" | Chunking, embedding selection, reranking, and prompt engineering require continuous tuning |
| "Works out of the box" | 60-70% accuracy is typical; unacceptable for mission-critical workflows |

RAG shifts the problem from "the model doesn't know" to "the model misunderstands what it retrieved." The failure mode changes; it doesn't disappear.
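
One way to make "irrelevant chunks pollute context" measurable is precision at k: the share of the top-k retrieved chunks that a reviewer labeled relevant. The sketch below assumes hand-labeled ground truth; the chunk IDs are invented for illustration.

```python
def precision_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Share of the top-k retrieved chunks that were labeled relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for chunk_id in retrieved_ids[:k] if chunk_id in relevant_ids)
    return hits / k


retrieved = ["policy-12", "faq-03", "memo-88", "policy-14"]  # ranked retriever output
relevant = {"policy-12", "policy-14"}                          # hand-labeled ground truth

print(precision_at_k(retrieved, relevant, k=1))  # 1.0
print(precision_at_k(retrieved, relevant, k=4))  # 0.5
```

In this example, widening the context from one chunk to four halves its precision: half of what the model reads is noise.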

Fine-Tuning: The False Promise

When RAG accuracy disappoints, enterprises turn to fine-tuning, which brings problems of its own:

- Data collection is expensive: high-quality instruction-response pairs from real workflows are scarce

- Synthetic data is low-quality: generated examples lack the edge cases that matter

- Evaluation is undefined: without clear metrics, "better" is subjective

- Catastrophic forgetting: models lose general capabilities when overtrained on narrow tasks

- Continuous drift: business logic changes; fine-tuned models become stale

- ML expertise required: most enterprises lack the team to iterate effectively

Fine-tuning optimizes the model for yesterday's problems. Enterprise workflows evolve faster than fine-tuning cycles.
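
The "evaluation is undefined" problem is easiest to see by writing down even the simplest metric. The sketch below scores two stand-in models against a small golden set using exact-match accuracy. The questions, answers, and both model stubs are hypothetical, and exact match is only one of many possible metrics.

```python
from typing import Callable


def exact_match_accuracy(
    model: Callable[[str], str], golden: list[tuple[str, str]]
) -> float:
    """Fraction of golden questions answered exactly (ignoring case and whitespace)."""
    if not golden:
        raise ValueError("golden set must not be empty")
    hits = sum(
        1
        for question, expected in golden
        if model(question).strip().lower() == expected.strip().lower()
    )
    return hits / len(golden)


# Hypothetical golden set drawn from a real workflow.
golden_set = [
    ("Payment terms for vendor A?", "Net 30"),
    ("Approval limit for managers?", "5000 USD"),
    ("Fiscal year end month?", "June"),
]


def base_model(question: str) -> str:
    """Stub standing in for the model before fine-tuning."""
    return {"Payment terms for vendor A?": "Net 30"}.get(question, "unknown")


def tuned_model(question: str) -> str:
    """Stub standing in for the model after fine-tuning."""
    answers = {
        "Payment terms for vendor A?": "net 30",
        "Approval limit for managers?": "5000 USD",
    }
    return answers.get(question, "unknown")


print(exact_match_accuracy(base_model, golden_set))
print(exact_match_accuracy(tuned_model, golden_set))
```

Rerunning the same golden set after every business-rule change is also a cheap way to detect the drift described above.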

The Explainability Crisis

Even when RAG or fine-tuned models produce correct outputs, enterprises cannot explain why:

- Which documents influenced the response?

- What reasoning chain led to the conclusion?

- Would a slightly different query produce a different result?

- Can we audit this decision for compliance?

In regulated industries — finance, healthcare, manufacturing — unexplainable AI is non-deployable AI.
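
The four audit questions above map naturally onto a record that is written at the moment a response is produced. The following is a minimal sketch of such a record; the `DecisionTrace` name and its fields are assumptions for illustration, not a description of any product's trace format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionTrace:
    query: str
    retrieved_doc_ids: list[str]   # which documents influenced the response
    reasoning_steps: list[str]     # the chain that led to the conclusion
    answer: str
    model_version: str             # needed to reproduce or compare results later
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_audit_json(self) -> str:
        """Serialize with stable key order so records diff cleanly in an audit log."""
        return json.dumps(asdict(self), sort_keys=True)


trace = DecisionTrace(
    query="Is invoice 1042 eligible for early-payment discount?",
    retrieved_doc_ids=["contract-77", "invoice-1042"],
    reasoning_steps=[
        "Contract 77 grants 2% discount if paid within 10 days",
        "Invoice 1042 was issued 6 days ago",
    ],
    answer="Eligible",
    model_version="example-model-v1",
)
print(trace.to_audit_json())
```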

The Adoption Outcome

Low AI adoption in enterprise workflows is not a technology problem — it's an architecture problem.

Organizations have tokens. They don't have intelligence. The LangChain + RAG + fine-tuning stack produces:

- Demos that impress but don't survive contact with production

- Pilots that stall when accuracy requirements become real

- Technical debt that consumes engineering resources

- Negative ROI after accounting for infrastructure and team costs

The Agentic Breakthrough

Coding Agents Work

Claude Code, OpenAI Codex CLI, Gemini Code Assist, Cursor — these tools represent a step change in developer productivity:

- Agents autonomously navigate codebases

- They read, reason, edit, test, and iterate

- Complex multi-file refactors happen in minutes

- Developers report 2-10x productivity gains

This is not RAG. This is agency — models that take actions, observe outcomes, and adapt.
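
The act, observe, adapt cycle can be sketched as a plain loop. In the toy below, a hidden target number stands in for "the tests pass" and a binary-search policy stands in for the model. Both are assumptions chosen to keep the example runnable; neither reflects how any particular coding agent is implemented.

```python
from typing import Callable, Optional


def run_agent(
    propose: Callable[[list[str]], str],
    execute: Callable[[str], tuple[bool, str]],
    max_steps: int = 5,
) -> Optional[str]:
    """Propose an action, execute it, feed the observation back, stop on success."""
    observations: list[str] = []
    for _ in range(max_steps):
        action = propose(observations)   # act, conditioned on what was seen so far
        ok, feedback = execute(action)   # observe the outcome
        if ok:
            return action
        observations.append(feedback)    # adapt: the next proposal sees the failure
    return None


TARGET = 7  # hidden from the policy; stands in for a passing test suite


def execute(action: str) -> tuple[bool, str]:
    guess = int(action)
    if guess == TARGET:
        return True, "pass"
    return False, f"{guess}:{'low' if guess < TARGET else 'high'}"


def propose(observations: list[str]) -> str:
    """Toy policy: narrow the search range using every past failure."""
    lo, hi = 0, 10
    for obs in observations:
        value, direction = obs.split(":")
        if direction == "low":
            lo = int(value) + 1
        else:
            hi = int(value) - 1
    return str((lo + hi) // 2)


print(run_agent(propose, execute))
```

The loop itself is trivial. What makes it work is that `execute` returns unambiguous feedback on every step.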

| Factor | Coding Agents | Enterprise AI |
|---|---|---|
| Model training | End-to-end RL on code tasks; tool use is native | Generic instruction tuning; tool use is bolted on |
| Tool surface | Minimal: file read/write, search, terminal | Vast: thousands of APIs across decades of systems |
| Execution env | Local filesystem; immediate feedback | Distributed systems; latency, failures, permissions |
| Feedback loop | Tests pass/fail; syntax errors are unambiguous | Business correctness is nuanced and delayed |
| Sandboxing | Local machine; low blast radius | Production systems; high stakes |

The Zenera Approach: Infrastructure for Enterprise Agency

The Core Insight

Coding agents succeed because they have:

- Models trained end-to-end on code tasks, with native tool use

- A minimal tool surface: file read/write, search, terminal

- A local execution environment with immediate feedback

- An unambiguous feedback loop: tests pass or fail

- A sandbox with a low blast radius

Zenera provides the enterprise equivalent:

In-App Vibe Coding: Applications, Not Chatbots

Zenera agents don't just respond; they build applications. When a user describes a recurring business need, Zenera synthesizes a fully functional, interactive application that connects to live enterprise data sources, updates in real time, provides filtering, visualization, and alerting, and can be saved, shared, and reused.

Example: Inventory Stock-Out Forecasting

| Chatbot Approach | Zenera Approach |
|---|---|
| User asks: "Which SKUs will stock out next week?" | User asks: "Build me a stock-out forecasting tool" |
| AI returns a text list based on current snapshot | AI generates a live dashboard application |
| Tomorrow, the answer is stale | Application pulls real-time inventory data via synthesized integrations |
| User must ask again for updated forecast | Forecasts update automatically as supply chain data flows in |
| No filtering: user gets all SKUs or must re-query | Interactive filters: by warehouse, category, supplier, lead time |
| No visualization: raw text output | Time-series plots showing projected depletion curves per SKU |
| No alerts: user must remember to check | Configurable threshold alerts pushed to Slack, email, or mobile |
| Context lost after session ends | Application persists; user returns daily to the same tool |
| Cannot share with procurement team | Shareable link with role-based access controls |
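
To make the example concrete, here is a minimal sketch of the calculation such a tool might run on each data refresh. It assumes a naive linear depletion model (on-hand units divided by average daily demand) and invented SKU data. A real forecaster would also account for inbound shipments, seasonality, and lead times.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkuStatus:
    sku: str
    on_hand: int          # units currently in stock
    daily_demand: float   # average units consumed per day


def days_until_stock_out(status: SkuStatus) -> float:
    """Linear projection; items with no demand never stock out."""
    if status.daily_demand <= 0:
        return float("inf")
    return status.on_hand / status.daily_demand


def stock_out_alerts(statuses: list[SkuStatus], horizon_days: float = 7.0) -> list[str]:
    """SKUs projected to run out within the horizon, most urgent first."""
    at_risk = [s for s in statuses if days_until_stock_out(s) <= horizon_days]
    return [s.sku for s in sorted(at_risk, key=days_until_stock_out)]


inventory = [
    SkuStatus("A", on_hand=100, daily_demand=20.0),  # 5 days left
    SkuStatus("B", on_hand=500, daily_demand=10.0),  # 50 days left
    SkuStatus("C", on_hand=30, daily_demand=10.0),   # 3 days left
    SkuStatus("D", on_hand=40, daily_demand=0.0),    # no demand
]
print(stock_out_alerts(inventory))  # ['C', 'A']
```

The `horizon_days` parameter plays the role of the configurable alert threshold in the table above.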

The Compounding Value

Every Zenera interaction potentially creates a reusable organizational asset:

- A supply chain analyst builds a stock-out forecaster; the entire procurement team uses it

- A finance manager builds a variance analyzer; it becomes the standard month-end workflow

- An operations lead builds a capacity planner; production scheduling adopts it

Chatbots answer questions once. Zenera builds tools that answer questions forever.

The ROI Equation

RAG + LangChain

| Factor | What it involves | Assessment |
|---|---|---|
| Infrastructure | Vector DB, inference, custom code | High |
| Engineering | Prompt tuning, integration maintenance | Ongoing |
| Accuracy | 60-70% on well-defined queries | Marginal |
| Scope | Document Q&A; limited action capability | Narrow |

Net ROI: Low or Negative

Zenera Agentic Platform

| Factor | What it involves | Assessment |
|---|---|---|
| Infrastructure | Managed platform deployment | Predictable |
| Engineering | Agent definition and goal specification | Focused |
| Accuracy | Agent-verified, auditable outcomes | Production-grade |
| Scope | Full workflow automation across enterprise systems | Broad |

Net ROI: 100x RAG Implementations

What Enterprises Actually Need

| Requirement | LangChain + RAG | Zenera |
|---|---|---|
| Transactional data operations | Not addressed | LakeFS-backed versioning |
| Fault-tolerant workflows | Fail on restart | Temporal durable execution |
| Arbitrary system integration | Pre-built tools only | Self-coding agents |
| Agent coordination | Manual prompt engineering | AI-powered alignment |
| Model optimization | Separate ML pipeline | Integrated fine-tuning |
| Multimodal knowledge access | Text vectors only | Hybrid RAG with vision |
| Explainability | Black box | Full decision tracing |
| Dynamic UI generation | Chat only | Automatic interfaces |
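
The difference between "fail on restart" and durable execution can be shown with a small checkpointing sketch. This is not Temporal's API: it is a plain-Python illustration of the underlying idea, with a JSON file standing in for a durable store and a hypothetical three-step workflow whose second step crashes once.

```python
import json
import os
import tempfile
from typing import Callable


def run_workflow(
    steps: list[tuple[str, Callable[[], None]]], checkpoint_path: str
) -> list[str]:
    """Run steps in order, skipping any already recorded in the checkpoint file."""
    done: list[str] = []
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            done = json.load(f)
    executed: list[str] = []
    for name, step in steps:
        if name in done:
            continue              # completed before the crash; do not repeat it
        step()                    # may raise; earlier steps stay recorded
        done.append(name)
        with open(checkpoint_path, "w") as f:
            json.dump(done, f)    # persist progress after every successful step
        executed.append(name)
    return executed


calls = {"transform": 0}


def extract() -> None:
    pass


def transform() -> None:
    calls["transform"] += 1
    if calls["transform"] == 1:
        raise RuntimeError("simulated crash")


def load() -> None:
    pass


steps = [("extract", extract), ("transform", transform), ("load", load)]
path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")

try:
    run_workflow(steps, path)     # first attempt crashes in "transform"
except RuntimeError:
    pass

resumed = run_workflow(steps, path)
print(resumed)  # ['transform', 'load']
```

On the second run, `extract` is skipped because its completion was persisted before the crash. A workflow without checkpoints would have repeated it.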

Conclusion

The enterprise AI gap is not about model capability. GPT-4, Claude, Gemini — these models are extraordinarily capable.

The gap is about infrastructure:

- RAG is retrieval, not agency

- LangChain is primitives, not production

- Fine-tuning is optimization, not architecture

Coding agents prove that agency at scale is possible. Zenera brings that capability to enterprise workflows — with the transactional guarantees, fault tolerance, and alignment verification that production systems require.

The choice is not between "AI" and "no AI." The choice is between:

- Thin wrappers that deliver demos and disappointment

- Agentic infrastructure that delivers automation and ROI

Enterprises have been accumulating tokens. Zenera converts them into intelligence.
