Zenera Go-To-Market Strategy

Bridging the Enterprise AI Adoption Chasm with an AI-Powered Agent Factory

March 2026

Executive Summary

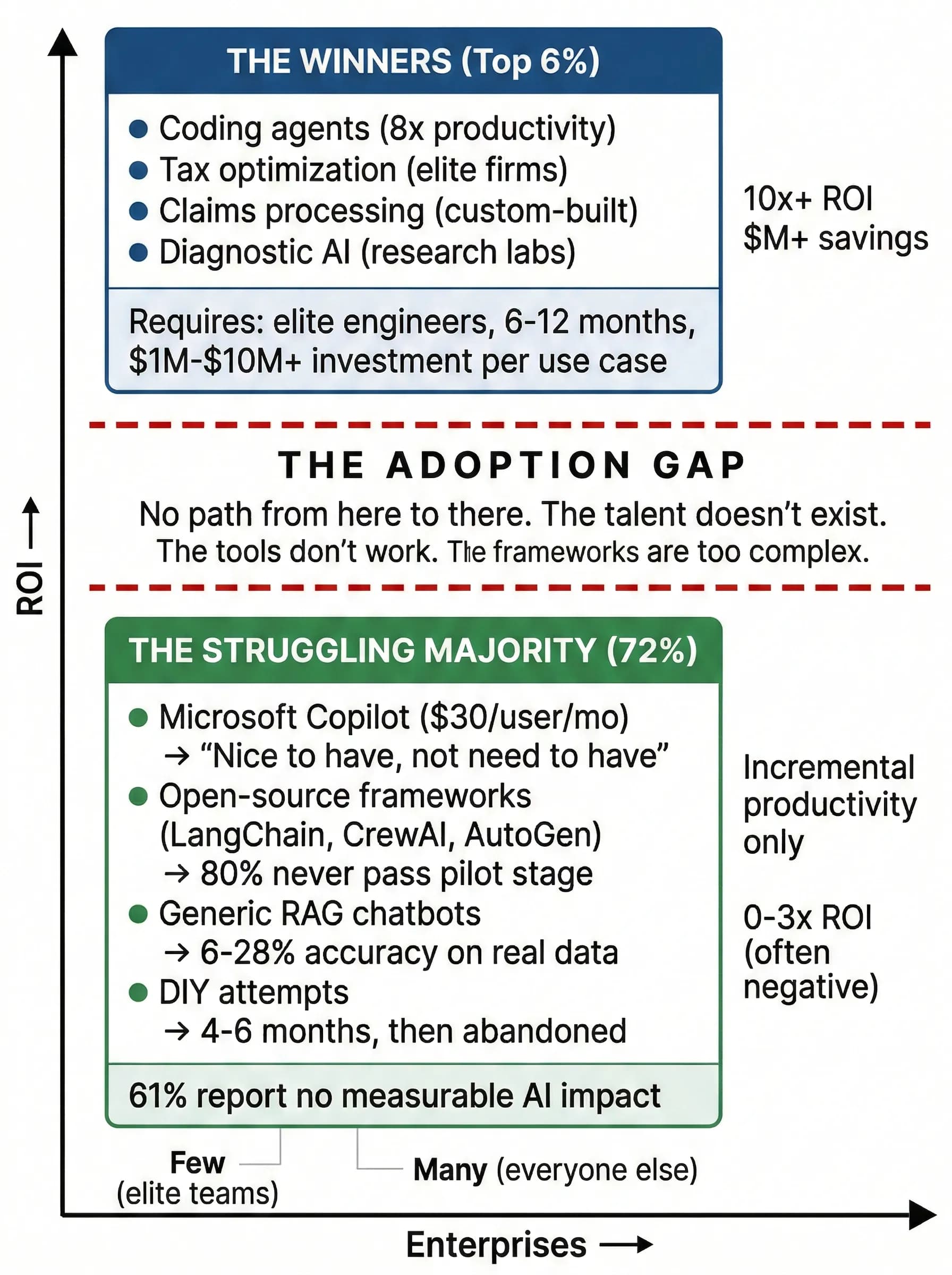

The enterprise AI market is experiencing a profound paradox. Large Language Models have achieved extraordinary capability — they write production-grade code, reason through complex medical diagnoses, and navigate multi-step financial analyses with superhuman accuracy. AI coding agents, fine-tuned to the isolated domain of software engineering, routinely exceed expectations and deliver 8x productivity multipliers. Yet the vast majority of enterprises — approximately 72% — are breaking even or losing money on their AI investments. Nearly 80% of enterprise AI projects never progress beyond the pilot stage.

This is not a technology problem. It is a deployment problem.

The $199 billion agentic AI market projected for 2034 is being gated by a structural adoption chasm. On one side: extraordinarily powerful models. On the other: enterprises trapped between tools that are too generic to solve real problems and frameworks that are too complex for anyone but elite engineering teams to operationalize.

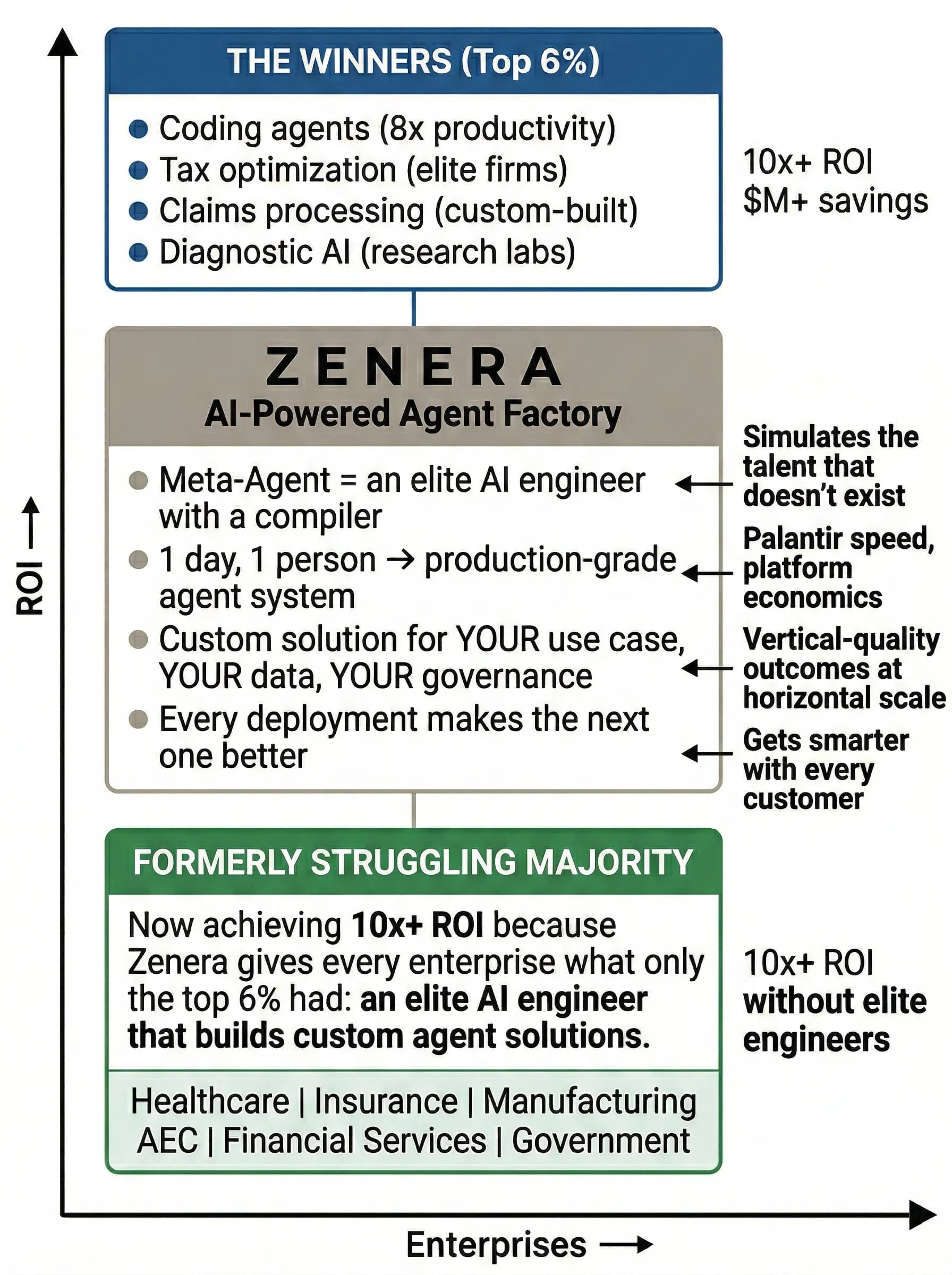

Zenera exists to bridge this chasm. By studying the market's clearest success stories — most notably Palantir's $7.2 billion revenue trajectory built on a “show, don't tell” deployment model — and combining that business strategy with the breakthrough capabilities of generative AI, Zenera has built an Enterprise AI Agent Factory: a platform where AI builds the AI. One engineer can design, generate, deploy, and operationalize a production-grade multi-agent system in a single day.

This white paper presents the market analysis, competitive dynamics, product thesis, target customer profile, and business model that define Zenera's go-to-market strategy.

The State of Enterprise AI in 2026

The Market Landscape

The global agentic AI market is valued at USD 7.55 billion in 2025 and is projected to expand at a CAGR of 43.84%, reaching USD 199 billion by 2034. AI agents are becoming a foundational infrastructure primitive — comparable in significance to the advent of cloud computing or the relational database.
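
The projection follows from simple compounding. As a quick sanity check on the figures quoted above, the sketch below compounds the 2025 base forward nine years at the stated CAGR. The function name `project_market_size` is ours, introduced only for illustration.

```python
def project_market_size(base_usd_bn: float, cagr: float, years: int) -> float:
    """Compound a base market size forward at a constant annual growth rate."""
    return base_usd_bn * (1.0 + cagr) ** years


# Figures quoted in the text: USD 7.55B in 2025, 43.84% CAGR, horizon 2034.
projected_2034 = project_market_size(7.55, 0.4384, 2034 - 2025)
print(f"Projected 2034 market size: USD {projected_2034:.1f} billion")
```

The result lands at roughly USD 199 billion, so the three quoted figures are mutually consistent.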

Yet adoption reality tells a different story:

| Metric | High Performers (Top 6%) | Average (33%) | Struggling (61%) |
|---|---|---|---|
| Enterprise EBIT Impact | 5%+ | Moderate/Fragmented | Negligible to Zero |
| Value Realization Multiplier | 10.3x ROI | 3.7x ROI | 0x ROI |
| Project Longevity | 3+ Years | 1–2 Years | < 1 Year (Abandoned) |
| Average Cost Savings | 15.2% | 5.5% | < 2% |
| Average Productivity Gain | 22.6% | 8.2% | < 3% |

The data reveals a winner-take-most dynamic: the top 6% of organizations are compounding their advantages through deep process redesign, while the bottom 61% are stuck in “pilot purgatory”, running expensive experiments that never reach production. Gartner predicted that at least 30% of generative AI projects would be abandoned after proof-of-concept by the end of 2025, citing poor data quality, inadequate risk controls, escalating costs, and a lack of clear business value alignment.

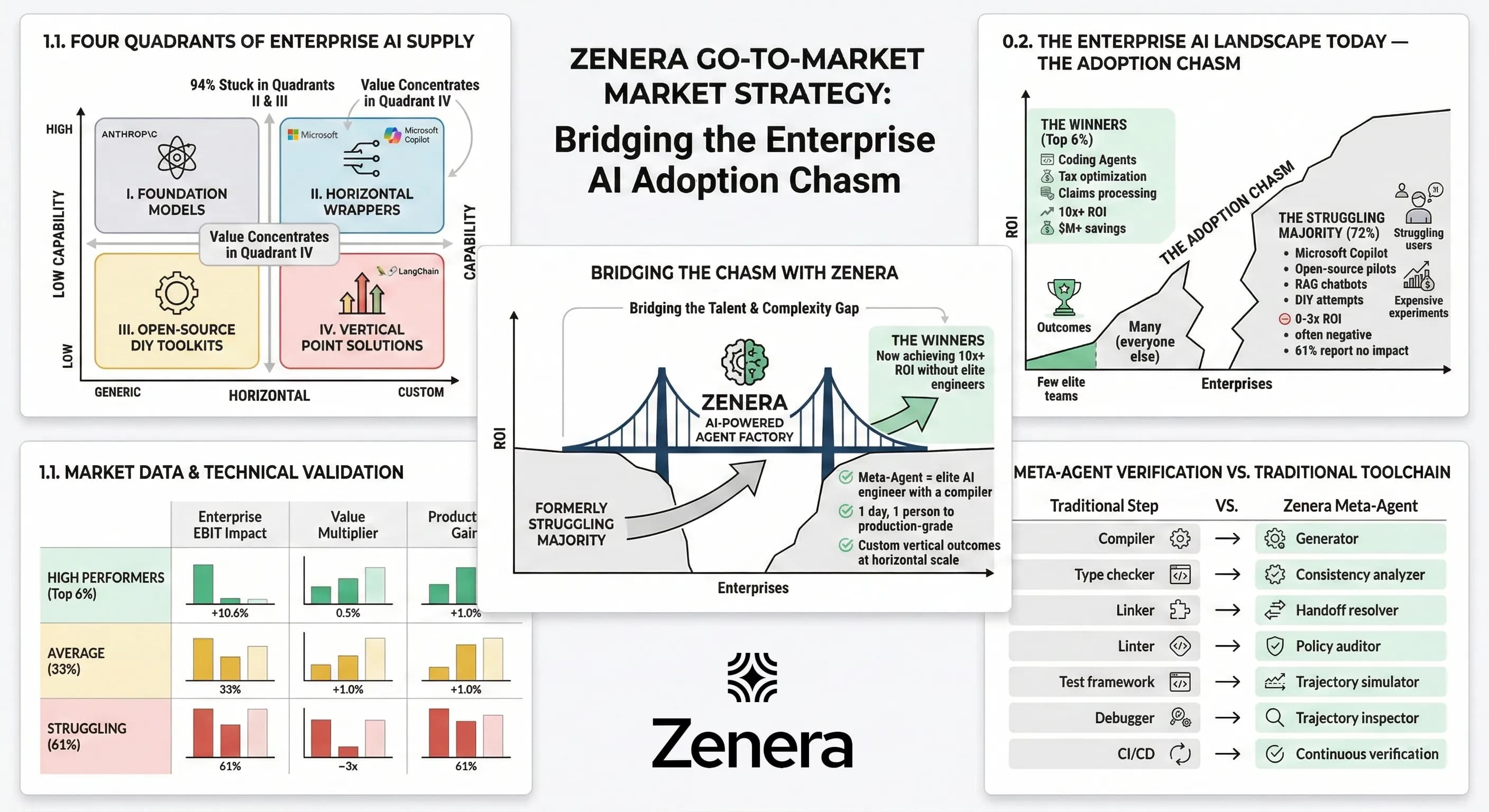

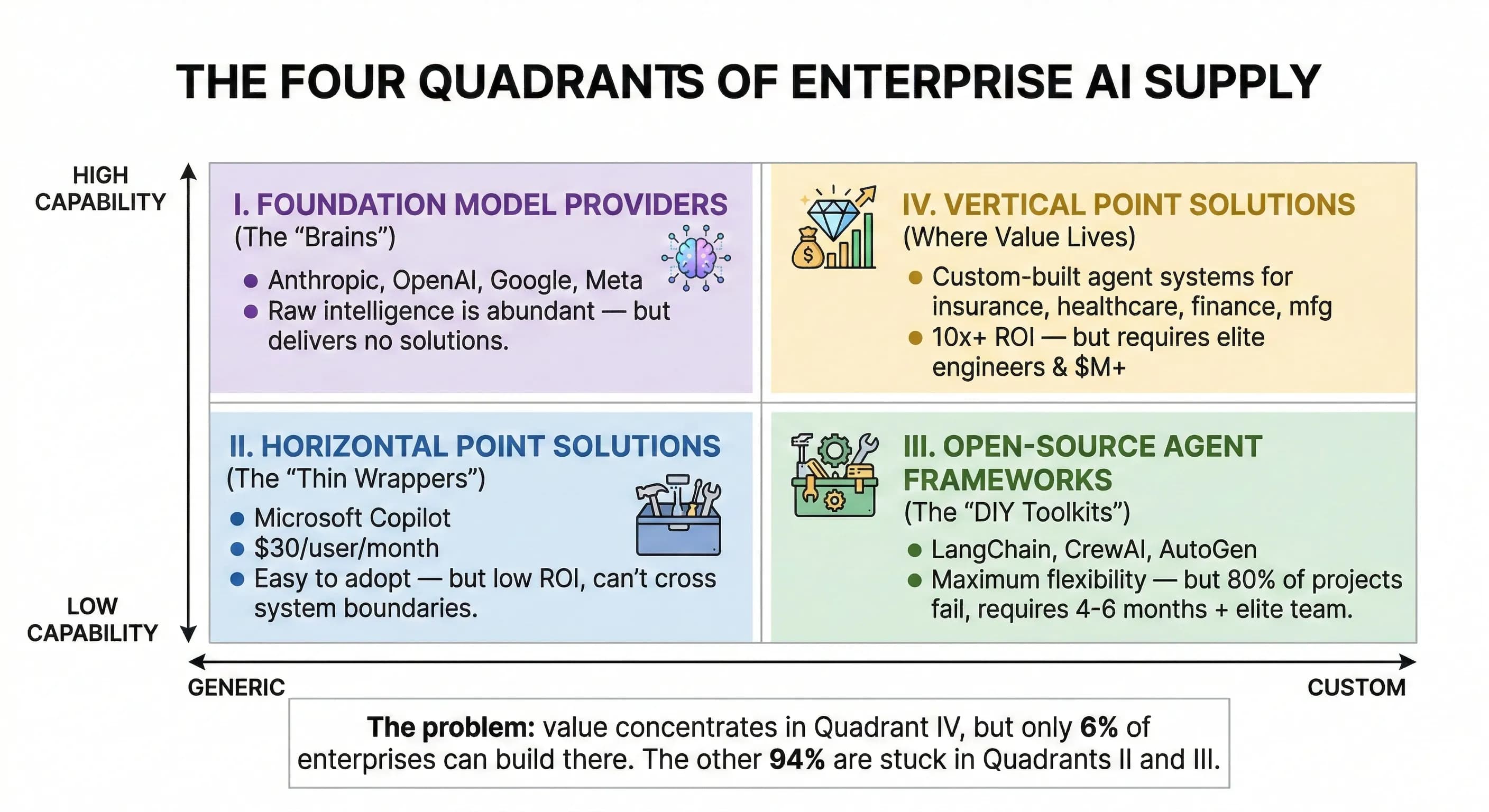

The Four Quadrants of Enterprise AI Supply

The enterprise AI supply landscape has consolidated into four distinct categories, each with structural strengths and fatal limitations:

Quadrant I: Foundation Model Providers (The “Brains”)

Anthropic (32% market share, 40% enterprise spend), OpenAI (25%), and Google (20%) have commoditized the intelligence layer. Models are extraordinarily capable — Claude reasons through regulatory documents, GPT writes production code, Gemini processes 2-million-token contexts. A price war has driven GPT-5 input costs to $1.25 per million tokens, making raw intelligence effectively abundant.

The limitation: Foundation model providers sell capability, not outcomes. They offer APIs. Enterprises need solutions. An API that can theoretically reason through a healthcare claims audit cannot, by itself, connect to the EHR, join data from the ERP, parse 100 payer contracts, produce audit-ready evidence, and deliver results personalized to a CFO versus a clinical analyst. The gap between “the model can do this” and “the enterprise has done this” is where all the value — and all the failure — lives.

Quadrant II: Horizontal Point Solutions (The “Thin Wrappers”)

Microsoft Copilot is the canonical example: a thin, hardcoded orchestration layer wrapping OpenAI's GPT models with Microsoft-defined behaviors, deployed across the Microsoft 365 ecosystem.

What Copilot does well: Seamless integration with email, calendar, Teams, and Office documents. Low barrier to adoption for the 400+ million Microsoft 365 users. Built-in responsible AI controls.

What Copilot cannot do:

| Limitation | Enterprise Impact |
|---|---|
| No custom orchestration | Cannot define multi-step workflows or agent coordination |
| No external system integration | Limited to Microsoft ecosystem; no arbitrary API access |
| No self-coding capability | Cannot synthesize integrations to legacy or proprietary systems |
| No transactional operations | Cannot perform atomic multi-system updates |
| No durable workflows | Cannot run long-running processes that survive failures |
| No model flexibility | Locked to OpenAI; cannot use Anthropic, Google, or local models |
| No domain depth | Generic prompts cannot encode healthcare billing rules or insurance regulatory logic |

Copilot excels at the productivity layer — drafting emails, summarizing meetings, generating slide decks. These are valuable but low-ROI activities that do not transform core business operations. When an enterprise tries to use Copilot for complex analytical questions — “Why is our MSK value-based contract underperforming by $3.2M?” — the system fails because the answer requires multi-system data joins, contract PDF parsing, clinical pathway analysis, and persona-specific presentation that Copilot's architecture fundamentally cannot support.

The result: enterprises report that Copilot is “nice to have” but not “need to have.” The $30/user/month cost is difficult to justify when the productivity gains are incremental rather than transformational. Microsoft's own research indicates that enterprise ROI expectations for Copilot are being systematically missed across regulated industries.

Quadrant III: Open-Source Agent Frameworks (The “DIY Toolkits”)

LangChain, CrewAI, Microsoft AutoGen, and dozens of open-source agent frameworks have democratized the ability to build multi-agent systems. These tools offer maximum flexibility: define agents with granular roles, wire tool integrations, create complex orchestration graphs.

The promise: Any developer can build an agent system. The leading frameworks have raised hundreds of millions in venture capital. Community momentum is enormous.

The reality: Open-source agent frameworks fail catastrophically in enterprise production. The reasons are structural, not incidental:

| Failure Mode | Description |
|---|---|
| Engineering complexity | A typical enterprise pilot requires 4–6 months and a specialized team (software architect, data engineer, AI/prompt engineer, MLOps, compliance). Only 5% of the work is API calls; 95% is data engineering, governance, and workflow integration. |
| No enterprise scaffolding | Frameworks lack built-in security, compliance guardrails, audit logging, RBAC, and explainability. Bolting these on after the fact is a 6–12 month engineering project. |
| Infinite failure surface | Agent systems defined in natural language have no compiler, no type checker, no linker. A prompt contradiction, a handoff loop, or a tool-instruction mismatch does not produce an error; it produces a wrong answer that looks right. |
| Integration dead-end | MCP (Model Context Protocol) has standardized agent-tool communication for modern APIs, but 80% of enterprise systems (legacy ERPs, mainframe terminals, proprietary protocols) have no MCP server and never will. |
| Context poisoning | Naive RAG implementations reduce organizational knowledge into isolated vectors, breaking logical flow. Generic text-to-SQL achieves 6%–28% accuracy on real-world medical datasets. |
| Runaway costs | Without guardrails, agent systems enter recursive loops. One documented case showed AutoGPT charging $14.40 for a simple recipe search due to inability to cache or reuse knowledge. |

The fundamental issue: open-source tools solve the last mile (agent orchestration) while ignoring the first ninety miles (data integration, governance, deployment, monitoring, domain knowledge). An enterprise that hires five engineers to build an agent system with LangChain will spend 6 months getting a demo to work — and then discover it cannot survive a Kubernetes node restart, cannot pass a SOC 2 audit, and produces confidently wrong answers 40% of the time.

Despite raising billions in aggregate funding, the open-source agent ecosystem has yet to produce a single complete enterprise solution outside of trivial RAG chatbots.

Quadrant IV: Verticalized Point Solutions (Where Value Concentrates)

The enterprises achieving 10x+ ROI on AI are not using generic tools. They are deploying highly customized, domain-specific agent systems:

- Insurance: Multi-agent claims processing with 7 specialized agents achieved an 80% reduction in processing time

- FinTech: Specialized financial learning models achieved 200%+ annualized returns with 65–75% win rates

- Healthcare: Autonomous diagnostic assistants achieved 99.5% accuracy in malignant tissue identification

- IT Operations: Agentic foundations eliminated 90% of manual rework and cleared thousands of backlog records in weeks

The pattern is clear: Success happens at the vertical, not the horizontal.

High-ROI use cases are deeply integrated into specific business processes, require multi-system data joins, demand domain expertise, and need governance appropriate to the industry.

The problem: Building these vertical solutions requires the most expensive, hardest-to-find talent in the market. A healthcare analytics agent system that achieves 95–99% accuracy demands deep clinical domain knowledge, multi-system integration engineering, regulatory compliance expertise, and agentic AI skills — a combination that effectively does not exist outside of elite consulting firms and Big Tech labs.

The Adoption Chasm: Why the Gap Exists

The market data tells a stark story. A tiny minority of enterprises achieve extraordinary returns from AI — but only because they have access to elite engineering talent or can afford Palantir-scale engagements. Everyone else is stuck.

Why the gap exists:

The winners succeed because they have something the majority does not — elite agentic AI engineers who can design custom multi-agent systems, integrate with messy enterprise data, build governance and compliance, and deploy to production. These engineers are the scarcest resource in the AI economy. There are perhaps a few thousand in the world. The 100,000+ enterprises on the bottom of this chart will never hire them.

Every existing option fails to bridge this gap:

- Foundation models (Anthropic, OpenAI, Google) — enormously capable, but they sell raw intelligence, not solutions. An API cannot integrate with your EHR.

- Horizontal tools (Microsoft Copilot) — easy to adopt, but limited to low-complexity tasks. Cannot cross system boundaries or encode domain logic.

- Open-source frameworks (LangChain, CrewAI) — maximum flexibility, but require the elite engineers that enterprises don't have. 95% of the work is integration, governance, and verification — not API calls.

- Palantir — proves the model works, but at $10M+ per engagement with Forward Deployed Engineers. Unreachable for the long tail.

"Zenera eliminates the adoption chasm by replacing the scarcest resource in the equation — the elite agentic AI engineer — with an AI that does the same work. Every enterprise gets the equivalent of a Palantir Forward Deployed Engineer — an expert who understands their specific problem, builds a custom solution on their specific data, and deploys it to production with enterprise-grade security, accuracy, and explainability. In one day. At platform economics."

The Agentic Paradox: Why Coding Agents Succeed While Enterprise Agents Fail

One of the most instructive asymmetries in the 2026 AI landscape is the dominance of coding agents versus the stagnation of general enterprise agents.

AI coding agents — GitHub Copilot, Cursor, Claude Code — routinely exceed expectations. They generate production-quality modules in seconds, debug across codebases, and manage multi-step engineering workflows with minimal supervision. The productivity multiplier is approximately 8x: an agent generates in seconds what takes a human hours, and the review takes minutes.

Why coding works: Software engineering has a deterministic feedback loop. When an agent writes code, the compiler tells it instantly whether the output is correct. Tests pass or fail. Linters flag issues. The environment provides unambiguous, machine-readable feedback at every step.

Why enterprise fails: General business operations have no compiler. When an agent analyzes a healthcare claims dataset and produces a margin decomposition, there is no machine-readable test for “is this correct?” The feedback loop is human review — slow, expensive, inconsistent. A wrong answer that looks plausible can persist for weeks before discovery.

| Dimension | Coding Agents | Enterprise Agents |
|---|---|---|
| Feedback | Compiler, tests, linters | Human review, business outcomes |
| Feedback speed | Seconds | Days to months |
| Risk profile | Sandboxed dev environment | Real-world transactions, legal/regulatory |
| Multi-step complexity | High (autonomous modules) | Low (bounded to < 10 steps) |
| Supervision ratio | 8:1 productivity | 4:1 productivity |

"To make enterprise agents as reliable as coding agents, you need to build the equivalent of a compiler for business processes — a system that can verify agent behavior, detect errors before they reach production, and provide rapid feedback loops. This is precisely what the Zenera Meta-Agent does."

Lessons from the Market — Palantir and the Execution Thesis

Why Palantir Is the Most Instructive Success Story

While the AI industry fixated on model benchmarks and chatbot demos in 2024–2025, one company quietly became the dominant force in enterprise AI deployment: Palantir Technologies.

Palantir reported U.S. Commercial revenue growth of 137% in Q4 2025 and projections of $7.2 billion in total revenue for 2026. While Google, Microsoft, and Anthropic spent years commoditizing the “Brain” (LLMs), Palantir focused on the Execution — the plumbing, security, and operational logic required to make AI deliver measurable business outcomes in the real world.

The lesson is not Palantir's technology. It is their philosophy:

AI as Technology, Not Product

The fundamental distinction between Palantir and every other enterprise AI vendor is this: Palantir does not sell AI as a product. Palantir deploys AI as a technology embedded within the customer's operating reality.

| Dimension | The “Product” Companies (Microsoft, Google, SaaS vendors) | The “Technology” Company (Palantir) |
|---|---|---|
| Offering | AI tools, APIs, copilots | Custom operating systems for each business |
| Data philosophy | "Upload your data to our cloud" | "We bring the AI to your data, wherever it lives" |
| Integration | Pre-built connectors for popular SaaS | Deep integration with legacy systems, proprietary data |
| Support | Self-service, documentation, API support | Forward Deployed Engineers on-site |
| Outcome | Users get a tool | Customers get their problems solved |

The AIP Bootcamp GTM — “Show, Don't Tell”

Palantir replaced the traditional 18-month enterprise sales cycle with AIP Bootcamps:

- Day 1–5: The customer brings their messiest, most siloed data. Palantir's team builds a functioning "Digital Twin" of the customer's operations on their actual data.

- Day 5 result: The customer is not evaluating a brochure. They are evaluating a running system that solves a problem they thought was unsolvable.

- Conversion rate: Exceeding 70%.

This model works because it eliminates the single largest barrier to enterprise AI adoption: belief. Executives have been burned by AI vendor promises for years. They do not want slides. They want proof. Palantir gives them proof in five days.

The Forward Deployed Engineer — The Secret Weapon

The operational backbone of Palantir's model is the Forward Deployed Engineer (FDE) — elite software engineers who live at the customer's location and solve the customer's specific problems. Not generic problems. Not demo problems. The actual, messy, data-integration-nightmare problems that the customer's own IT team has failed to solve for years.

FDEs are the “human bridge” between a generic LLM and a specific business reality. They translate “We have too many empty beds” into a Python-based operational workflow connected to the hospital's EHR, staffing system, and scheduling platform.

The healthcare proof:

- HCA Healthcare: 30,000 active users. Platform manages nurse staffing by predicting patient surges and automating 90% of scheduling

- Cleveland Clinic: Reduced ER hold times by 38 minutes per patient

- NHS (UK): Enabled 80,000 additional operations by optimizing operating room block times

The Palantir Limitation — And Zenera's Opportunity

Palantir's approach works. But it has a fundamental scaling constraint: it requires an army of Forward Deployed Engineers.

Palantir can serve Fortune 500 companies willing to pay millions for custom deployments. But the long tail of enterprises — the 100,000+ mid-market and large enterprises that desperately need AI-powered operations — cannot afford Palantir's engagement model. They are trapped: too complex for Copilot, too resource-constrained for open source, too niche for point solutions, and too small for Palantir.

"This is the market Zenera was built to serve."

The Zenera Answer — An AI-Powered Agent Factory

The Core Thesis

"If the gap between AI capability and enterprise deployment is bridged by Forward Deployed Engineers, then the key to scaling across the long tail is automating the Forward Deployed Engineer."

Palantir uses human FDEs who spend weeks understanding the customer's data, building integrations, designing workflows, and deploying agent systems. This works brilliantly — at $10M+ deal sizes.

Zenera uses AI to do the same work. The Zenera Meta-Agent is an AI system that builds AI systems. It is the technological fusion of Palantir's deployment strategy and the best of AI coding agents — creating an “AI-Powered FDE” that can design, generate, test, deploy, and monitor a complete enterprise agent system in a day instead of months.

This is not a metaphor. The Meta-Agent is Zenera's GTM strategy. Palantir's most valuable asset is not their software — it is the Forward Deployed Engineer who shows up, understands the customer's chaos, and builds the solution. Zenera's most valuable asset is the Meta-Agent that does the same thing — except it scales infinitely, works around the clock, and improves with every deployment. The product and the go-to-market motion are the same thing.

The Meta-Agent: An Elite AI Engineer with a Compiler

The Meta-Agent is not just a compiler. It is not just a code generator or a workflow builder.

The Meta-Agent is an elite agentic AI engineer — an expert in the discipline of designing, building, verifying, deploying, and operating multi-agent systems.

It possesses deep knowledge of agent architecture patterns, orchestration strategies, integration techniques, governance frameworks, and failure modes. It reasons about enterprise problems the way Palantir's best Forward Deployed Engineers do: understanding the business context, decomposing the problem into specialized agents, designing the data flows, writing the integration code, and wiring the governance.

But unlike a human FDE, the Meta-Agent also has tools that no human engineer possesses — the equivalent of a compiler, type checker, linker, test framework, and debugger for systems defined in natural language. This is the decisive advantage.

The Problem: Agentic Systems Have No Compiler

Traditional software is built in formal languages with precise semantics. Every layer has verification:

| Traditional Software | Verification |
|---|---|
| Syntax | Compiler rejects malformed code |
| Types | Type checker catches mismatches |
| Dependencies | Linker fails on unresolved references |
| Behavior | Test suites report pass/fail |
| Runtime | Stack traces pinpoint failures |

Agentic systems have none of this. They are defined in natural language (prompts, policies, handoff rules), a medium with no formal grammar, no type system, and no compiler. A prompt contradiction parses successfully. A handoff loop looks identical to a valid handoff. An agent told to “verify customer identity” with no verification tool does not raise an error; it hallucinates. The system accepts anything and fails silently. This is a central reason why nearly 80% of enterprise AI projects never progress beyond the pilot stage.
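
The “verify customer identity” failure can be caught mechanically once each agent's required capabilities and each tool's provided capabilities are written down as data. The sketch below is a minimal illustration, not Zenera's implementation: `AgentSpec`, `missing_capabilities`, and the capability labels are hypothetical names, and it assumes the required capabilities have already been extracted from the agent's natural-language instructions, which is the hard part in practice.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical, simplified description of one agent."""
    name: str
    # Capabilities the instructions ask for (assumed already extracted).
    required_capabilities: set[str]
    # Tool name -> capabilities that tool provides.
    tools: dict[str, set[str]] = field(default_factory=dict)


def missing_capabilities(agent: AgentSpec) -> set[str]:
    """Return capabilities the instructions demand but no tool provides."""
    provided: set[str] = set()
    for capabilities in agent.tools.values():
        provided |= capabilities
    return agent.required_capabilities - provided


# An agent told to verify identity, but wired only to a policy lookup tool.
intake = AgentSpec(
    name="intake_agent",
    required_capabilities={"verify_customer_identity", "lookup_policy"},
    tools={"policy_db": {"lookup_policy"}},
)

gaps = missing_capabilities(intake)
if gaps:
    print(f"{intake.name}: no tool provides {sorted(gaps)}")
```

A check of this kind turns a silent hallucination into an explicit, pre-deployment error, which is the role a linker plays for unresolved references in traditional software.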

No human team can solve this at scale. A system with 15 agents has millions of possible execution paths. Prompt changes cause cascading failures three handoffs downstream. Policy conflicts between teams are invisible until production breaks. The problem is combinatorial, semantic, and non-deterministic — precisely the kind of problem that AI is better at solving than humans.
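
To give a sense of scale, here is a rough back-of-the-envelope count. It assumes, purely for illustration, that any of the 15 agents may hand off to any of the other 14 and that a run is capped at six handoffs; neither number is a measured property of a real deployment, and `count_execution_paths` is a hypothetical helper.

```python
def count_execution_paths(num_agents: int, max_handoffs: int) -> int:
    """Count handoff sequences that start from one fixed entry agent.

    Assumes a fully connected handoff graph: at each step the active agent
    may hand off to any of the other (num_agents - 1) agents, or stop.
    """
    fan_out = num_agents - 1
    # A path with h handoffs has fan_out**h variants; sum over h = 0..max_handoffs.
    return sum(fan_out ** h for h in range(max_handoffs + 1))


paths = count_execution_paths(num_agents=15, max_handoffs=6)
print(f"{paths:,} distinct paths")
```

Under these assumptions the count is a little over eight million, and it grows by a factor of 14 with each additional handoff allowed.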

The Solution: An Elite Engineer Armed with Verification Tools

The Meta-Agent combines the reasoning of an expert agentic AI architect with the precision of automated verification:

As an elite engineer, the Meta-Agent:

- Understands the customer's business problem and decomposes it into the right agent architecture

- Selects the optimal model for each agent based on task requirements (reasoning depth, speed, cost)

- Designs data flows across enterprise systems, including legacy systems with no modern API

- Writes integration code, system prompts, tool configurations, handoff rules, and governance policies

- Generates UI components, dashboards, and approval workflows tailored to each persona

- Evolves running systems in response to natural language requests — no redeployment required

As a compiler and verification system, the Meta-Agent:

| Traditional Toolchain | Meta-Agent Equivalent |
|---|---|
| Compiler: Translates specifications into executable code | Generator: Translates natural language descriptions into complete agent systems (prompts, tools, handoffs, workflows, UI) |
| Type checker: Verifies component compatibility | Consistency analyzer: Verifies semantic compatibility across all prompts and policies |
| Linker: Resolves dependencies | Handoff resolver: Verifies all agent references resolve, all paths terminate, no infinite loops |
| Linter: Detects suspicious patterns | Policy auditor: Detects ambiguous instructions, conflicting policies, underspecified conditions |
| Test framework: Executes tests and reports failures | Trajectory simulator: Generates synthetic workloads, simulates execution paths, reports predicted failures before deployment |
| Debugger: Inspects execution state | Trajectory inspector: Replays agent executions, identifies exactly where reasoning diverged |
| CI/CD: Automates build/test/deploy | Continuous verification: Automates generation, simulation, deployment, monitoring, and autonomous improvement |

This is the combination that does not exist anywhere else in the market. Open-source frameworks give you building blocks but no engineer and no compiler. Consulting firms give you human engineers but no compiler and no scale. Palantir gives you elite human engineers but at $10M+ per engagement. Zenera gives you an elite AI engineer with a compiler — at the marginal cost of compute.

The Multiplication Effect

Palantir's FDE model works because one brilliant engineer, embedded in the customer's reality, can build what generic tools cannot. The limitation is that brilliant engineers are scarce, expensive, and serial — one FDE can only be at one customer at a time.

The Meta-Agent is a Palantir FDE that can be cloned infinitely. Every customer gets the equivalent of an elite agentic AI engineer — one who understands their industry, their data, their governance requirements — working on their problem around the clock. And unlike a human FDE, the Meta-Agent gets better with every deployment: patterns that work for one healthcare system inform the architecture for the next. Integrations that succeed at one insurance carrier become part of the standard toolchain for every carrier after.

"This is how Zenera replicates Palantir's $7.2B model without an army of human consultants: the AI is the consultant."

How It Works: From Problem to Production in One Day

Step 1: Problem Description (30 minutes)

The user describes the problem in natural language: "I need an agent system that monitors our MSK value-based contract performance, integrates with our EHR (Epic), ERP (Workday), and RCM (Cerner), parses our payer contracts, identifies margin variance by decomposing clinical variation, supply chain costs, and billing patterns, and delivers personalized analyses to our CFO, CMO, and care managers."

Step 2: Architecture Design (Generated in minutes)

The Meta-Agent decomposes this into a multi-agent system:

- Data Integration Agent — Connects to Epic, Workday, and Cerner APIs (self-coding integration for any system without an MCP server)

- Contract Analysis Agent — Parses 100+ payer contract PDFs using vision and NLP

- Clinical Variance Agent — Analyzes pathway compliance against implant utilization

- Financial Decomposition Agent — Performs margin variance analysis across supply chain, billing, and leakage dimensions

- Persona Delivery Agent — Formats output for CFO (financial), CMO (clinical), and care managers (operational)

For each agent, the Meta-Agent generates: system instructions, tool configurations, handoff rules, governance boundaries, model selection rationale, and test scenarios.

Step 3: Verification (Minutes, not months)

Before deployment, the Meta-Agent runs the agentic equivalent of a compile-and-test cycle:

- Prompt consistency check: Are any agent instructions contradictory?

- Handoff graph analysis: Do all paths terminate? Are there loops? Dead ends?

- Tool-instruction alignment: Does every agent have the tools it needs?

- Policy conflict detection: Do agents from different teams have conflicting governance rules?

- Trajectory simulation: Run 1,000 synthetic queries and measure accuracy, latency, and failure rates
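
The handoff graph analysis above reduces to standard graph algorithms once handoffs are represented as a directed graph. The following is a minimal sketch, not Zenera's implementation: `analyze_handoffs` is a hypothetical helper, and the handoff edges between the Step 2 agents are assumed for illustration, since the text does not specify them.

```python
def analyze_handoffs(handoffs: dict[str, list[str]], terminals: set[str]) -> dict[str, list]:
    """Report unresolved references, dead ends, and loops in a handoff graph."""
    agents = set(handoffs)
    # Targets that no agent definition resolves to (the "linker" check).
    unresolved = sorted(
        {target for targets in handoffs.values() for target in targets if target not in agents}
    )
    # Agents that hand off to nobody but are not declared as valid end points.
    dead_ends = sorted(a for a, targets in handoffs.items() if not targets and a not in terminals)

    # Depth-first search; meeting an agent that is still "in progress" means a loop.
    NEW, IN_PROGRESS, DONE = 0, 1, 2
    state = {agent: NEW for agent in agents}
    cycles: list[list[str]] = []

    def visit(agent: str, path: list[str]) -> None:
        state[agent] = IN_PROGRESS
        for target in handoffs[agent]:
            if target not in agents:
                continue  # already reported as unresolved
            if state[target] == IN_PROGRESS:
                cycles.append(path[path.index(target):] + [target])
            elif state[target] == NEW:
                visit(target, path + [target])
        state[agent] = DONE

    for agent in sorted(agents):
        if state[agent] == NEW:
            visit(agent, [agent])
    return {"unresolved": unresolved, "dead_ends": dead_ends, "cycles": cycles}


# Assumed topology for the five agents from Step 2, with three defects injected.
broken = {
    "data_integration": ["contract_analysis", "clinical_variance"],
    "contract_analysis": [],  # dead end: never reaches delivery
    "clinical_variance": ["financial_decomposition"],
    "financial_decomposition": ["clinical_variance", "audit_agent"],  # loop + unknown agent
    "persona_delivery": [],
}
report = analyze_handoffs(broken, terminals={"persona_delivery"})
for issue, findings in report.items():
    print(issue, findings)
```

Not every loop is a defect (a bounded retry is a legitimate design), so a production check would likely flag loops for review rather than reject them outright.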

Step 4: Deployment (Hours)

The verified system deploys on infrastructure the customer controls — private cloud, on-premise, air-gapped, or hybrid. Zero data egress. Full RBAC. Immutable audit logs. The system is live, running on the customer's actual data, solving their actual problem.

Step 5: Continuous Evolution (Ongoing)

The user talks to the Meta-Agent to evolve the running system: "Add a Slack notification when clinical variance exceeds 15%." The Meta-Agent regenerates the affected components, re-verifies the entire graph, and hot-swaps the updated system.

The Platform Foundation: Enterprise-Grade Infrastructure

The Meta-Agent generates agent systems that run on Zenera's production-grade platform:

Transactional Object Memory

Git-like versioning built on LakeFS over MinIO. Agents read and write to isolated branches; changes commit atomically or roll back entirely. Full lineage tracking. Multi-agent concurrency with optimistic locking. Agents transform terabytes of data with the same confidence developers have in database transactions.
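
The branch-and-commit behavior described above can be illustrated with a small in-memory model. This is a sketch of the optimistic locking idea only: `VersionedStore`, `Branch`, and `ConflictError` are hypothetical names, the conflict check is deliberately coarse (any intervening commit causes a conflict), and none of this is the LakeFS API.

```python
class ConflictError(Exception):
    """Raised when the main line changed after this branch was created."""


class VersionedStore:
    """In-memory stand-in for a versioned object store (illustrative only)."""

    def __init__(self) -> None:
        self.data: dict[str, str] = {}
        self.version = 0

    def branch(self) -> "Branch":
        return Branch(self)


class Branch:
    """An isolated workspace: reads see a snapshot, writes are staged."""

    def __init__(self, store: VersionedStore) -> None:
        self._store = store
        self._base_version = store.version
        self._snapshot = dict(store.data)
        self._staged: dict[str, str] = {}

    def get(self, key: str):
        return self._staged.get(key, self._snapshot.get(key))

    def put(self, key: str, value: str) -> None:
        self._staged[key] = value

    def commit(self) -> int:
        # Optimistic check: refuse to commit if someone else committed first.
        if self._store.version != self._base_version:
            raise ConflictError("main line moved; re-branch and retry")
        self._store.data.update(self._staged)  # all staged writes land together
        self._store.version += 1
        return self._store.version

    def rollback(self) -> None:
        self._staged.clear()  # nothing ever reached the main line


store = VersionedStore()
agent_a, agent_b = store.branch(), store.branch()
agent_a.put("claims/2025", "cleaned by agent A")
agent_b.put("claims/2025", "cleaned by agent B")
agent_a.commit()
try:
    agent_b.commit()
except ConflictError:
    agent_b.rollback()  # agent B's partial work is discarded, not half-applied
print(store.data)
```

The design choice worth noting is that neither agent takes a lock up front; conflicts are detected only at commit time, which keeps concurrent agents from blocking one another.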

Fault-Tolerant Workflow Orchestration

Powered by Temporal. Workflows persist execution state at every decision point. Automatic retries. Cross-node migration. Workflows survive Kubernetes pod restarts, node failures, and network partitions. Long-running processes pause for days awaiting human approval and resume instantly.
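
The core idea behind durable execution, persisting state after every step so a restarted process resumes rather than starts over, can be shown in plain Python. This sketch does not use the Temporal SDK; `run_workflow` and the JSON checkpoint file are hypothetical stand-ins for what an orchestration engine does internally.

```python
import json
import os
import tempfile
from typing import Callable

Step = Callable[[list], object]


def run_workflow(steps: list[Step], checkpoint_path: str) -> list:
    """Run steps in order, persisting progress after each one."""
    state = {"next_step": 0, "results": []}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path, encoding="utf-8") as f:
            state = json.load(f)  # resume where the previous run stopped

    for index in range(state["next_step"], len(steps)):
        state["results"].append(steps[index](state["results"]))
        state["next_step"] = index + 1
        # Write to a temp file, then rename, so a crash never leaves a torn checkpoint.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w", encoding="utf-8") as f:
            json.dump(state, f)
        os.replace(tmp_path, checkpoint_path)
    return state["results"]


calls = {"extract": 0, "transform": 0}
fail_next_transform = [True]


def extract(_results: list) -> str:
    calls["extract"] += 1
    return "rows"


def transform(results: list) -> str:
    calls["transform"] += 1
    if fail_next_transform[0]:
        fail_next_transform[0] = False
        raise RuntimeError("simulated node failure")
    return results[-1].upper()


checkpoint = os.path.join(tempfile.mkdtemp(), "workflow.json")
try:
    run_workflow([extract, transform], checkpoint)
except RuntimeError:
    pass  # the process "dies" after step 0 was checkpointed
final_results = run_workflow([extract, transform], checkpoint)  # resumes at step 1
print(final_results, calls)
```

The second run skips `extract` entirely because its result was already checkpointed. The same mechanism is what lets a workflow wait days for a human approval without holding a process open.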

Self-Coding Integrations

When an agent encounters a system without an MCP server (which is 80% of enterprise systems), it reads the API documentation, generates integration code, validates it in a sandbox, and executes it. Successfully synthesized integrations are promoted to the standard toolchain. One agent integrated with a 15-year-old ERP in hours — a task that previously required months.

Event-Reactive Architecture

Agents are activated by storage events, workflow signals, external webhooks, and human interactions. Every trigger is transactionally paired with its handler. No event is lost. Runaway cascades are throttled. Circular activation chains are detected and broken.

Enterprise Governance

RBAC at platform, system, and data levels. Immutable audit trails for every agent decision, tool call, handoff, and human action. Tamper-evident cryptographic hashing. Exportable to enterprise SIEM. Configurable retention policies per regulation.
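
Tamper-evident hashing is commonly implemented as a hash chain, in which each audit entry's hash covers the previous entry's hash. The sketch below shows that general technique using Python's standard `hashlib`; the entry layout and the function names `append_entry` and `verify_chain` are assumptions for illustration, not Zenera's actual log format.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    payload = json.dumps(event, sort_keys=True)  # stable serialization
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event whose hash commits to everything before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, event)})


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"actor": "contract_agent", "action": "tool_call", "tool": "pdf_parser"})
append_entry(audit_log, {"actor": "contract_agent", "action": "handoff", "to": "finance_agent"})
append_entry(audit_log, {"actor": "jdoe", "action": "approve"})
print("intact:", verify_chain(audit_log))

audit_log[1]["event"]["to"] = "someone_else"  # simulate after-the-fact tampering
print("after tampering:", verify_chain(audit_log))
```

A chain like this makes tampering detectable but does not prevent it; in practice the latest hash would also be anchored somewhere the log writer cannot modify.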

Security, Accuracy, and Explainability — By Design

Three non-negotiable requirements emerged from every customer conversation:

Security

Full data sovereignty. Zenera deploys on infrastructure the customer controls — private cloud, on-premise, or air-gapped. No telemetry phones home. No model calls to external APIs unless explicitly configured. Per-agent data boundaries. Per-user data filtering. Cross-system isolation. This is not a feature toggle — it is an architectural guarantee.

Accuracy

Generic text-to-SQL achieves 6–28% accuracy on real-world medical datasets. Zenera's approach combines three mechanisms: parameterized APIs for auditable data access, backend pipelines for regulatory components that require 100% accuracy, and agentic text-to-SQL with deep domain context, which reaches 95–99% accuracy on governed queries. Every data access pattern is documented, validated, and explainable.
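
The parameterized-API idea can be sketched with Python's standard `sqlite3` module: the SQL text is fixed and reviewable, and the agent supplies only values. The `claims` table, its columns, and the function `paid_total_for_payer` are hypothetical, chosen only to make the example concrete.

```python
import sqlite3


def paid_total_for_payer(conn: sqlite3.Connection, payer_id: str, year: int) -> float:
    """Fixed, reviewable query; callers supply values only, never SQL text."""
    row = conn.execute(
        "SELECT COALESCE(SUM(paid_amount), 0.0) FROM claims "
        "WHERE payer_id = ? AND service_year = ?",
        (payer_id, year),
    ).fetchone()
    return float(row[0])


# Hypothetical schema and rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE claims (payer_id TEXT, service_year INTEGER, paid_amount REAL)")
conn.executemany(
    "INSERT INTO claims VALUES (?, ?, ?)",
    [("PAYER_A", 2025, 100.0), ("PAYER_A", 2025, 250.5), ("PAYER_B", 2025, 75.0)],
)

print(paid_total_for_payer(conn, "PAYER_A", 2025))
# A hostile value is bound as data, not parsed as SQL, so it simply matches nothing.
print(paid_total_for_payer(conn, "PAYER_A' OR '1'='1", 2025))
```

Because the query text never changes, it can be documented and validated once, which is what makes this access pattern auditable in a way that free-form generated SQL is not.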

Explainability

For every agent decision, Zenera provides a complete explanation chain — input data, model invocation, reasoning steps, tool calls, handoff decisions, and output. Not just what the agent decided, but why. Provenance, governance context, and SQL code are displayed to end users. Regulators and auditors can trace any output to its inputs.

Go-To-Market Strategy

What We Learned from the Market

Three strategic lessons define Zenera's GTM approach:

Lesson 1: The product must satisfy the market's non-negotiable requirements.

Every enterprise buyer asks the same questions: Is my data secure? Are the answers accurate? Can I explain to regulators why the system made this decision? Can I control how agents execute from low-level tasks to end-to-end workflows? Zenera's platform was designed from the ground up to satisfy all of these requirements — not as add-ons, but as foundational architecture.

Lesson 2: Speed of deployment is the primary differentiator.

Palantir proved that demonstrating value in days, not months, converts prospects at 70%+ rates. The traditional enterprise AI deployment takes 4–6 months and a specialized team of 5+. Zenera must compress this to days. The Meta-Agent makes this possible: one person designs and deploys a production-grade system in one day. Expansion across the enterprise must be equally frictionless: new roles, new use cases, and new departments, all on one platform that is easily extensible and maintains security boundaries throughout.

Lesson 3: Use case selection determines everything.

Not all AI use cases are created equal.

Use Case Strategy: Avoid the Crowd, Own the Complexity

The vast majority of AI venture capital flows into a narrow set of high-volume, relatively low-complexity use cases where Silicon Valley companies cluster:

| Crowded Use Cases (Avoid) | Why They're Crowded | Competitive Reality |
|---|---|---|
| Customer support / chatbots | Low integration complexity, high demo appeal | 100+ funded startups, Zendesk/Intercom incumbents, Copilot embedded |
| Ticket management / IT service desk | SaaS-friendly, structured workflows | ServiceNow, Freshworks, Atera AI Copilot |
| Sales enablement / SDR automation | CRM integration is standardized | Salesforce Einstein, Outreach, Apollo, Clay |
| Meeting summarization / note-taking | Single-system, no integration | Otter, Fireflies, Copilot in Teams |
| Content generation / marketing | Low-stakes, easy to demo | Jasper, Copy.ai, thousands of wrappers |

These markets are saturated, margins are compressing, and the winners are already determined: incumbents with distribution advantages. Competing here with a platform play is a losing proposition.

Zenera targets the inverse: high-complexity, high-ROI use cases in industries that Silicon Valley ignores.

| Zenera Target Use Cases | Industry | Why Competitors Can't Touch These |
|---|---|---|
| Value-based contract margin analysis | Healthcare (non-clinical ops) | Requires EHR + ERP + RCM data joins, contract PDF parsing, persona-specific delivery |
| Clinical pathway compliance auditing | Healthcare | Multi-system correlation, regulatory nuance, explainability requirement |
| Predictive TCOR benchmarking & deductible optimization | Insurance | Decade-spanning policy archives, FROI/SROI XML parsing, actuarial modeling |
| Workers' comp regulatory compliance | Insurance | 50-state jurisdictional variation, legacy XML systems, auditable reasoning |
| Engineering change management | AEC / Manufacturing | Unstructured documentation corpora, legacy CAD/BIM systems, safety-critical governance |
| Supply chain anomaly detection & corrective action | Manufacturing | Multi-vendor integration, real-time sensor data, approval workflows |

Why these use cases are transformational:

No point solution exists: There is no SaaS product that analyzes musculoskeletal (MSK) value-based contract margin variance, and there never will be. The use case is too specific, too integration-heavy, and too domain-dependent for a point solution to address profitably.

AI crosses roles: A single agent system may serve a CFO (financial analysis), a Chief Medical Officer (clinical insights), and care managers (operational actions). This is not a "tool for one persona"; it is a cross-functional intelligence platform.

Very high ROI: A $3.2M margin variance recovery, a 40% reduction in regulatory compliance processing time, and an 80% reduction in claims processing are board-level outcomes, not incremental productivity gains.

Deep moat once deployed: Once Zenera is integrated with a customer's EHR, ERP, and RCM systems, running real analyses that affect financial outcomes, the switching cost is enormous. This is not a plugin that gets cancelled after a free trial.

Target Customer Profile

Primary segment: The Underserved Enterprise

Zenera targets companies that share three defining characteristics:

They do not and will never have the skills to build their own AI agent systems.

These are healthcare systems, insurance carriers, manufacturing firms, and engineering companies. They employ clinicians, actuaries, engineers, and operators, not AI researchers. Their IT teams manage EHR migrations and network security. They cannot hire prompt engineers, ML infrastructure specialists, or agentic AI architects: the talent does not exist in their labor markets, and even if it did, they could not compete with Silicon Valley compensation.

Their core business operations would benefit from agentic AI more than any Silicon Valley use case.

A healthcare system managing $500M in value-based contracts has more to gain from AI-powered margin analysis than a SaaS company has from AI-powered meeting summaries. An insurance carrier processing 50,000 complex claims annually has more to gain from agentic claims orchestration than a sales team has from automated email drafting. The ROI potential is asymmetrically concentrated in industries that are furthest from AI adoption.

There is no point solution for their use cases, and there never will be.

Their operational challenges are too specific, too integration-dependent, and too domain-complex for a generic SaaS tool. They need a system that understands their payer contracts, connects to their specific EHR configuration, and delivers insights in the format their CFO expects. This is, by definition, a custom solution — but one that must be deliverable at platform economics.

Secondary segment: The “Failed DIY” Enterprise

These are enterprises that attempted to build agent systems with open-source frameworks and failed. They have invested 6–12 months, burned through engineering resources, and are stuck with a demo that works in controlled environments and breaks in production. Zenera offers these companies a path from a failing experiment to a deployed solution in weeks.

The Palantir Playbook, Powered by AI

Zenera's GTM motion is a direct adaptation of Palantir's AIP Bootcamp model — rebuilt for AI-era economics. The key insight: the GTM strategy is embedded in the product itself. The Meta-Agent is not a development tool that Zenera engineers use internally to build solutions faster. The Meta-Agent is what faces the customer. It is Zenera's Forward Deployed Engineer — an AI that does what Palantir's human FDEs do, but multiplied across every customer simultaneously.

Palantir's model

Send an army of Forward Deployed Engineers to the customer's site for 5 days. Build a working solution on their data. Convert at 70%+ rates.

Palantir's constraint

Each engagement requires a team of elite engineers. Minimum deal size: millions. Total addressable customers: hundreds. Palantir's brilliance is bottlenecked by the number of FDEs they can hire, train, and deploy.

Zenera's model

Send one person — a "Solution Architect" — equipped with the Meta-Agent. The Meta-Agent is the FDE. It understands the customer's problem, designs the agent architecture, writes the integration code, verifies the entire system, and deploys it — all in a single day.

Zenera's advantage: Every Zenera Solution Architect carries an infinitely scalable, continuously improving elite engineer in their pocket. This cuts deployment cost by 10–20x, making the Palantir-style “show, don't tell” approach viable for mid-market enterprises. And because the Meta-Agent learns from every deployment, the hundredth healthcare engagement is dramatically faster and more accurate than the first.

| Dimension | Palantir | Zenera |
|---|---|---|
| Deployment team | 3–10 FDEs | 1 Solution Architect + Meta-Agent |
| Time to first value | 5 days (bootcamp) + weeks (productionization) | 1 day (design + generate + deploy) |
| Minimum deal size | $1M+ annually | Accessible to mid-market |
| Integration approach | Human reverse-engineering of legacy systems | Self-coding agents synthesize integrations |
| Scaling model | Hire more FDEs (expensive, slow) | Meta-Agent scales infinitely (marginal cost near zero) |
| Customer expansion | New FDE engagement per use case | User describes next use case → Meta-Agent generates it |

The Expansion Flywheel

The initial deployment is the wedge. The expansion model is the business:

Week 1: Deploy the first use case for one team (e.g., VBC margin analysis for the CFO's office).

Months 1–3: Success generates demand from adjacent teams. The Chief Medical Officer wants clinical quality analytics. The VP of Operations wants staffing optimization. Each new use case is generated by the Meta-Agent, deployed on the same platform, and governed by the same RBAC framework.

Months 3–6: The platform becomes the enterprise AI nervous system. New departments self-serve through the Meta-Agent. IT governance teams control access, audit agent behavior, and approve new deployments, all through a single control plane.

Key architectural enabler: Every new use case runs on the shared Zenera platform with shared data infrastructure, shared governance, and shared security. This eliminates tool sprawl — the enterprise does not accumulate 200 disconnected AI tools. It has one platform, one governance model, one audit trail.

The Business Model

Revenue Architecture

Zenera's commercial model is built on a radical premise: the software is free. We charge for outcomes.

The Zenera platform — Meta-Agent, enterprise runtime, governance framework, all components — is provided at no cost. It is proprietary (not open-source), but free to deploy. Zenera earns revenue through two streams: fixed consulting rates for each engagement phase, and a percentage of the measurable business value the system creates once it goes live.

Revenue Stream 1: Consulting (Fixed Rates)

Engagements follow three phases — Pilot (1–4 weeks), Integration (4–8 weeks), and Expansion (ongoing per use case). Each phase carries a fixed consulting rate covering Solution Architect time, Meta-Agent-driven design and deployment, integration hardening, and training.

Revenue Stream 2: Value Share (% of Measured Outcomes)

When the system goes live in production, Zenera earns an agreed percentage of the measurable value created — the delta between the customer's baseline performance and post-deployment performance. Metrics, baselines, and measurement methodology are agreed jointly before go-live. Each use case has its own value measurement and value-share agreement.
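
The value-share calculation described above can be sketched as a small function. This is an illustration under stated assumptions, not the contractual formula: it assumes a metric where higher is better, it assumes no fee is owed when performance does not improve (the zero floor is our assumption, not a term from the source), and the function name `value_share` is hypothetical.

```python
from decimal import Decimal


def value_share(baseline: Decimal, post_deployment: Decimal, rate: Decimal) -> Decimal:
    """Fee owed for one use case: agreed rate times the measured improvement.

    baseline and post_deployment are the agreed metric (in dollars) before
    and after go-live; rate is the agreed share as a fraction, e.g. 0.15.
    """
    if not Decimal("0") <= rate <= Decimal("1"):
        raise ValueError("rate must be between 0 and 1")
    delta = post_deployment - baseline
    # Assumption for this sketch: a negative or zero delta produces no fee.
    measured_value = max(delta, Decimal("0"))
    return measured_value * rate
```

For example, with a hypothetical baseline of $10.0M and post-deployment performance of $13.2M, a 15% rate gives `value_share(Decimal("10000000"), Decimal("13200000"), Decimal("0.15"))`, which equals 480,000. `Decimal` is used instead of floating point so that currency arithmetic stays exact.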

| Element | Conventional AI Vendor | Zenera |
|---|---|---|
| Software | $100K–$500K/year license | Free |
| Revenue trigger | Contract signature | System creates measurable value |
| Risk allocation | Customer bears all risk | Shared: Zenera invests consulting upfront |
| Incentive alignment | Vendor profits whether customer succeeds or not | Both sides profit only when the customer wins |
| Infrastructure cost | Vendor pays (40–60% of COGS for hosting + LLM) | Customer-provided: Zenera has zero infrastructure cost |

Why this model wins:

Safety for the customer. No upfront software commitment. Pay a fixed consulting rate for the pilot; if it doesn't demonstrate value, walk away. The maximum downside is the pilot rate.

Alignment for both sides. Zenera is financially motivated to maximize every deployment's success — not to sell seats, not to upsell features, but to increase actual business value.

Zero infrastructure exposure for Zenera. All compute, storage, and LLM API costs are borne by the customer on their own infrastructure. Zenera's cost basis is purely people and IP — yielding gross margins above 90%.

The strongest possible proof point. "We pay them 15% of the $3.2M they found that we didn't know existed" is the most compelling customer reference in enterprise AI.

Illustrative engagement economics (mid-size healthcare system):

| Use Case | Value Created (Annual) | Value Share (15%) |
|---|---|---|
| VBC margin variance recovery | $3.2M | $480K |
| Claims processing optimization | $2.1M | $315K |
| Regulatory compliance cost reduction | $1.9M | $285K |
| Year 1 total (3 use cases) | $7.2M | $1.08M + consulting rates |

The customer keeps ~85% of all value created, before consulting rates. The CFO approves the next use case enthusiastically. Zenera's revenue compounds with every expansion.
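
The totals in the illustrative table can be reproduced with a few lines of arithmetic. The sketch below uses only the figures from the table; the names `USE_CASES`, `SHARE_PERCENT`, and `summarize` are ours, and consulting rates are left out because the source gives no amounts for them.

```python
# Annual value created per use case, in whole dollars (from the table above).
USE_CASES = {
    "VBC margin variance recovery": 3_200_000,
    "Claims processing optimization": 2_100_000,
    "Regulatory compliance cost reduction": 1_900_000,
}
SHARE_PERCENT = 15  # illustrative value-share rate from the table


def summarize(use_cases: dict, share_percent: int) -> dict:
    """Compute per-use-case shares, totals, and what the customer retains."""
    # Integer math floors to whole dollars; it is exact for these figures.
    shares = {
        name: value * share_percent // 100 for name, value in use_cases.items()
    }
    total_value = sum(use_cases.values())
    total_share = sum(shares.values())
    return {
        "shares": shares,
        "total_value": total_value,
        "total_share": total_share,
        "customer_retained": total_value - total_share,
    }


summary = summarize(USE_CASES, SHARE_PERCENT)
```

Here `summary["total_value"]` is 7,200,000 and `summary["total_share"]` is 1,080,000, leaving the customer 6,120,000 (85%) before any consulting rates are deducted.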

For the complete pricing model, engagement phases, and commercial terms, see the companion document: Zenera Pricing & Commercial Model.

Unit Economics

The Meta-Agent is a structural cost advantage:

| Cost Element | Traditional Agent Platform | Zenera |
|---|---|---|
| Pre-sale engineering | 4–8 weeks of custom demos | 1-day deployment on customer data |
| Implementation | 4–6 month consulting engagement | 1 day (Meta-Agent generated) |
| Ongoing customization | Professional services per use case | Customer self-serves via Meta-Agent |
| Support burden | High (custom code, fragile pipelines) | Low (generated systems, verified trajectories) |

The result: dramatically lower customer acquisition costs, faster time-to-revenue, and a scalable delivery model that does not require a linear increase in headcount per customer.

Competitive Moat

Zenera's moat deepens over time across three dimensions:

Data moat

Once the platform integrates with a customer's EHR, ERP, RCM, and proprietary systems, the switching cost becomes enormous. Re-implementing these integrations with a different vendor requires months of engineering, and the customer has already shown that it cannot do that work itself.

Knowledge moat

Every deployment teaches the Meta-Agent more about the domain. Agent system patterns that work for one healthcare system inform generated architectures for the next. Self-coded integrations that succeed become part of the standard toolchain. The platform becomes more capable with every customer.

Governance moat

Once the enterprise's compliance team has approved Zenera's audit trail, RBAC model, and explainability framework, migrating to a platform that lacks these capabilities is not an option — especially in regulated industries where governance re-certification can take a year.

The Opportunity — Why Now

Three Converging Forces

Force 1: Model capability has outrun deployment capability.

LLMs in 2026 can reason through any enterprise problem. They can write code, parse contracts, analyze financial data, generate SQL, and explain their reasoning. The technology is not the bottleneck. Deployment is. Zenera exists to close this gap.

Force 2: The long tail of enterprises is desperate and unserved.

88% of enterprises are using AI in at least one function. 72% are breaking even or losing money. 61% report no measurable impact. These companies are not skeptical of AI; they have been failed by their current vendors. They are actively searching for a solution that works, and they will pay a premium for the first platform that delivers.

Force 3: AI can now automate the automation.

Palantir proved the deployment model. But it required an army of humans. In 2026, AI coding agents have achieved the capability to generate, test, and deploy complex software systems autonomously. The Zenera Meta-Agent applies this capability to the specific domain of agentic system engineering — creating a self-reinforcing cycle where AI builds the AI that runs the enterprise.

The Addressable Market

Immediate TAM

Healthcare operations, insurance risk management, and industrial engineering enterprises in the U.S. and Europe with over 1,000 employees that have no internal agentic AI capability. Estimated at 50,000+ enterprises.

Expansion TAM

Any enterprise in any regulated industry where core business operations require multi-system integration, domain expertise, and governance — the full long tail of the $199 billion agentic AI market.

The competitive vacuum

Silicon Valley's elite — Google, Microsoft, Anthropic, OpenAI — are focused on building models and horizontal tools. They are not sending engineers to hospital operations centers. Startups are clustered around support chatbots and sales automation. The deep enterprise — the companies that would benefit most from AI — is being systematically ignored.

Zenera fills that vacuum.

Conclusion: The Future-Proof GTM

Zenera's go-to-market strategy is built on a simple observation: the companies that need agentic AI the most are the ones least able to build it themselves.

They will never have AI engineering teams. There will never be a SaaS product for their specific operational challenges. Open-source frameworks will never deliver the security, accuracy, and explainability their regulators demand. And Palantir will never come to their door at a price they can afford.

Zenera's answer is to make the deployment model itself intelligent. By building an AI that builds AI — a Meta-Agent that serves as the compiler, debugger, and runtime optimizer for agentic systems — Zenera compresses what previously required an army of elite engineers and months of effort into a single day with a single person.

This is not a marginal improvement. It is a category shift.

The $199 billion agentic AI market will not be won by the company with the best model. It will be won by the company that solves the deployment problem — that bridges the chasm between what AI can do and what enterprises have done.

Zenera is that bridge.

See the Meta-Agent in Action

Book a session and watch the Meta-Agent design, generate, and deploy a production-grade agent system on your data — in a single day.
