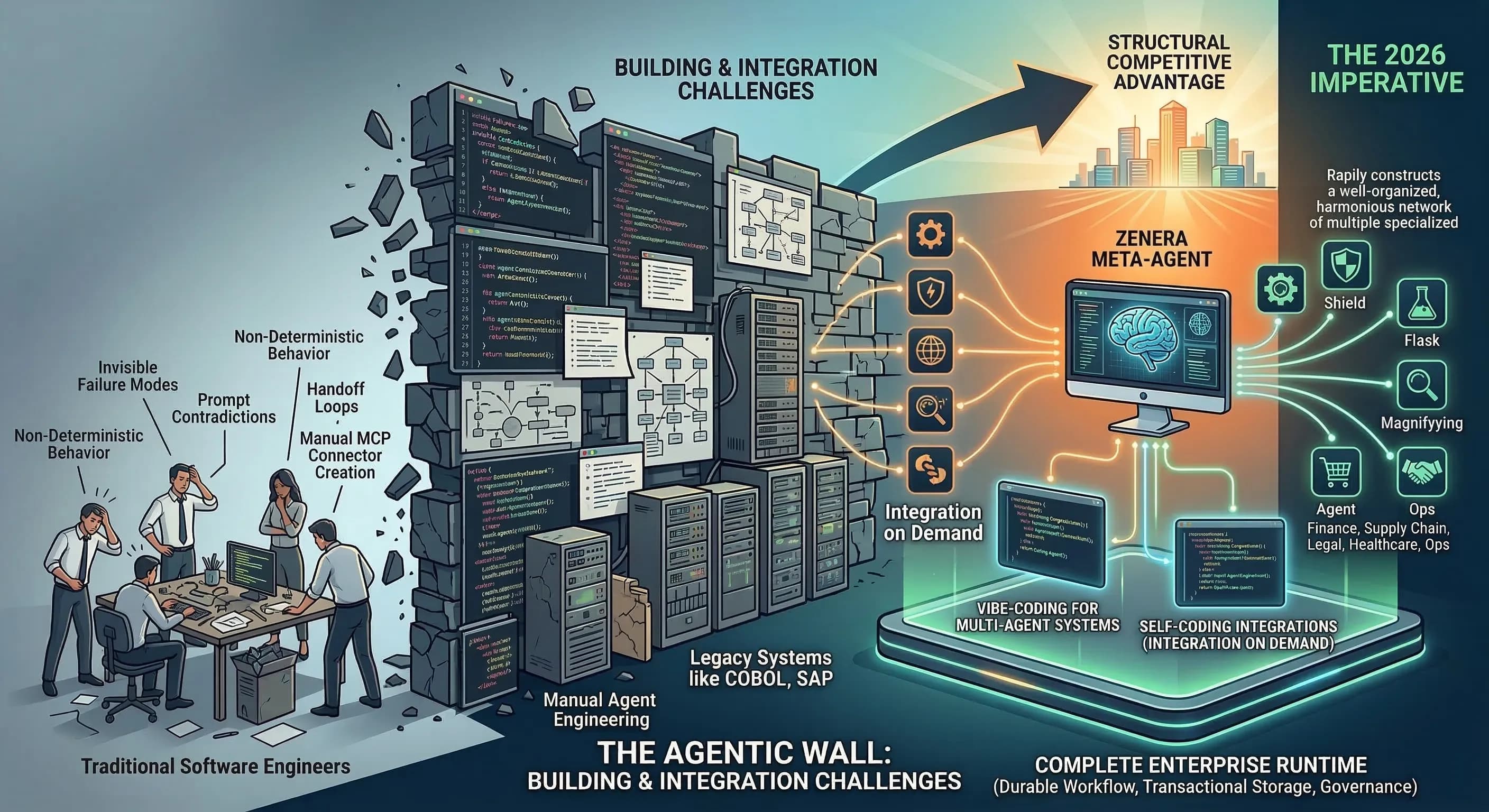

The enterprise integration problem was supposed to be solved by now. Model Context Protocol arrived in late 2024 with the promise of a universal plug-and-play standard — one protocol to connect every AI agent to every enterprise system. Two years later, the promise has aged poorly. MCP didn't eliminate the integration burden. It duplicated it.

Every internal service that once maintained a REST API now needs a second API surface: an MCP server. Every legacy ERP that barely had a REST endpoint still doesn't have one — and no one is building an MCP server for it either. The systems that need integration the most are the ones MCP helps the least.

This article proposes a different architecture: integrations as skills — where agents write, debug, and evolve their own integration logic at runtime, building an organic library of reusable capabilities that grows with every task.

I. The MCP Illusion

A Second API Surface Is Not a Solution

MCP's fundamental design mirrors what enterprises already had. Before MCP, you had REST. Now you have REST and MCP. The integration matrix didn't shrink — it doubled.

Consider a mid-size enterprise with 50 internal services:

| Before MCP | After MCP |
|---|---|
| 50 REST APIs to maintain | 50 REST APIs + 50 MCP servers to maintain |
| Custom connectors per AI tool | MCP clients per AI tool |
| Legacy systems unintegrated | Legacy systems still unintegrated |
| Total integration surfaces: 50 | Total integration surfaces: 100 |

For every modern SaaS product that ships an MCP server alongside its REST API, there are ten internal systems — custom ERPs, proprietary databases, COBOL mainframes, SOAP services from 2008 — that will never get one. MCP servers require engineering effort, ongoing maintenance, and synchronization with the underlying REST API. When the REST endpoint changes but the MCP tool description doesn't, agents hallucinate confidently — calling functions with wrong parameters, receiving unexpected responses, and producing subtly incorrect results that take weeks to trace.

Static Tools in a Dynamic World

MCP exposes a fixed menu of tools. An agent either finds what it needs on that menu, or it fails. There is no middle ground.

In practice, enterprise workflows rarely map cleanly to pre-defined tool granularity. An agent tasked with reconciling vendor invoices across SAP and a procurement database needs to query paginated lists from both systems, join records on fuzzy-matched identifiers, apply business rules that vary by region, and write the results back transactionally. No single MCP tool covers this. The agent needs several tools plus custom glue logic, error handling for partial failures, and retry semantics, none of which MCP's flat tool registry can express.

The result is a familiar pattern: the demo works, the pilot stalls, and the production deployment never ships.

The Context Window Tax

A large enterprise might expose hundreds or thousands of MCP tools. Every tool comes with a name, description, and JSON schema that must be loaded into the agent's context window before it can reason about which tools to use. At scale, this creates a devastating tax:

- 150,000+ tokens consumed by tool descriptions alone — before the agent processes a single user request

- Selection accuracy collapses when similarly-named tools compete for attention

- Models freeze or hallucinate tool calls when overwhelmed by options

MCP solves the existence of a connection. It does not solve the intelligence of using it.
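To make the tax concrete, here is a back-of-the-envelope sketch. The per-tool figure (150 tokens), the registry size (1,000 tools), and the 200,000-token window are illustrative assumptions rather than measurements; they only show how a total on the order of 150,000 tokens can arise when every schema is loaded up front.

```python
def registry_context_cost(num_tools: int, tokens_per_tool: int) -> int:
    """Tokens spent on tool descriptions when every schema is loaded up front."""
    return num_tools * tokens_per_tool


def remaining_budget(context_window: int, num_tools: int, tokens_per_tool: int) -> int:
    """Tokens left for the actual task after the registry is loaded (never negative)."""
    return max(0, context_window - registry_context_cost(num_tools, tokens_per_tool))


# Assumed figures: 1,000 tools at roughly 150 tokens each, 200,000-token window.
cost = registry_context_cost(1_000, 150)
left = remaining_budget(200_000, 1_000, 150)
print(f"registry: {cost} tokens, left for the task: {left} tokens")
```

The cost is linear in the number of tools, so doubling the registry doubles the overhead regardless of how few tools a given request actually needs.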

II. Integrations Are Not APIs. Integrations Are Skills.

Reframing the Problem

The fundamental error in the MCP worldview is treating integration as a protocol problem. It isn't. Integration is a capability problem.

A human analyst integrating data from two systems doesn't need a protocol. They need knowledge: how does this system authenticate? What endpoints exist? What data format does it return? How do I handle pagination? What are the rate limits? How do I map fields between systems? Armed with that knowledge, they write a script, test it, and save it for next time.

Zenera's architecture applies the same logic to agents. An integration is not a pre-built connector. An integration is a skill — a reusable, self-contained capability that an agent learns, validates, and retains.

Anatomy of a Skill

In Zenera, a skill is a first-class artifact with a precise structure:

A skill is not a tool definition. It is a complete, tested, versioned integration: a description for semantic discovery, authentication handled externally, implementation code that actually runs, and operational metadata that tracks reliability over time.
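As a rough illustration of those four parts, a skill record might be modeled like this. The class and field names (`Skill`, `SkillStats`, `auth_provider`, and so on) are hypothetical and chosen for this sketch; they are not Zenera's actual schema.

```python
from dataclasses import dataclass, field


@dataclass
class SkillStats:
    """Operational metadata accumulated across invocations."""
    invocations: int = 0
    failures: int = 0

    @property
    def success_rate(self) -> float:
        if self.invocations == 0:
            return 0.0
        return 1 - self.failures / self.invocations


@dataclass
class Skill:
    name: str
    description: str      # text used for semantic discovery
    auth_provider: str    # name handed to the authentication layer; no credentials stored
    version: int
    code: str             # implementation source, executed in a sandbox
    stats: SkillStats = field(default_factory=SkillStats)


# Hypothetical example entry.
skill = Skill(
    name="list_open_incidents",
    description="List open incident tickets from the ITSM system",
    auth_provider="itsm-prod",
    version=1,
    code="def run(token): ...",
)
skill.stats.invocations = 4
skill.stats.failures = 1
print(skill.name, skill.stats.success_rate)
```

Keeping the credential reference as a provider name, rather than a secret, is what lets the same record be shared across agents without leaking anything.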

Skills can be as simple as a single API call or as complex as a multi-system orchestration pipeline. The agent doesn't care — it finds skills by description, executes them in a sandbox, and observes the result.

III. The Authentication Layer: Solved Once, Used Everywhere

Why Authentication Is Separated

The hardest part of enterprise integration is rarely the API call itself. It's the authentication dance: OAuth2 flows, token refresh cycles, certificate rotation, SAML federation, API key vaulting, and the cascading failures when any of these expire silently at 3 AM.

Zenera separates authentication from integration logic entirely through a managed abstraction layer built on Nango and similar providers. This layer handles:

| Responsibility | How It Works |
|---|---|
| Connection establishment | OAuth2 flows, API key storage, certificate management (configured once per external system) |
| Token lifecycle | Automatic refresh, rotation, and re-authentication without agent involvement |
| Credential isolation | Agents never see raw credentials; they request tokens by provider name |
| Multi-tenant support | Different teams can have different credentials for the same system |
| Audit trail | Every token request is logged with agent identity, timestamp, and target system |

From the agent's perspective, authentication is a single function call:

`token = get_token("salesforce-prod")`

The agent doesn't know or care whether this is OAuth2 with PKCE, a rotated API key, or a federated SAML assertion. The authentication layer manages the complexity. The agent focuses on the integration logic.

This separation is critical because it means self-coded integrations inherit enterprise-grade authentication automatically. An agent writing a new integration from scratch doesn't need to implement OAuth flows — it calls get_token(), gets a valid bearer token, and writes the business logic.
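A minimal sketch of what that looks like inside a self-coded integration follows. The `get_token` body here is a stand-in that returns a fake token so the example runs on its own; the real function is provided by the authentication layer. The URL and the `build_request` helper are hypothetical, and the request is built but never sent.

```python
import urllib.request


def get_token(provider: str) -> str:
    """Stand-in for the authentication layer; the real one returns a live token."""
    return f"demo-token-for-{provider}"


def build_request(provider: str, url: str) -> urllib.request.Request:
    """Build an authenticated GET request without touching raw credentials."""
    token = get_token(provider)
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
        method="GET",
    )


# Hypothetical endpoint, used only to show the header wiring.
req = build_request("salesforce-prod", "https://example.invalid/api/accounts")
print(req.get_method(), req.full_url)
print(req.get_header("Authorization"))
```

The integration code only ever names the provider, so swapping OAuth2 for an API key on the authentication side requires no change to the skill.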

IV. Self-Coding Integration: How Agents Build Their Own Skills

The Lifecycle of a Self-Coded Skill

When an agent encounters a task that requires connecting to a system it hasn't used before, the following sequence unfolds:

Bootstrap Skills: The Starting Point

Self-coding doesn't start from zero. Zenera uses bootstrap skills — minimal descriptors that give agents a starting foothold for a target system:

A bootstrap skill says: "This system exists. Here's where to learn about it. Here's how to authenticate. The rest is up to you."
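Those three statements map naturally onto a three-field descriptor. The sketch below is one possible shape, assuming hypothetical field names (`system`, `docs_url`, `auth_provider`) and a placeholder documentation URL; the actual descriptor format is not shown in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BootstrapSkill:
    system: str          # "This system exists."
    docs_url: str        # "Here's where to learn about it."
    auth_provider: str   # "Here's how to authenticate."

    def to_prompt(self) -> str:
        """Render the descriptor as context for an agent about to write a skill."""
        return (
            f"Target system: {self.system}\n"
            f"API documentation: {self.docs_url}\n"
            f"Authenticate with: get_token({self.auth_provider!r})"
        )


# Hypothetical descriptor for an ITSM system.
bootstrap = BootstrapSkill(
    system="itsm",
    docs_url="https://example.invalid/itsm/api-docs",
    auth_provider="itsm-prod",
)
print(bootstrap.to_prompt())
```

Everything beyond these fields, such as endpoints, pagination, and field mappings, is left for the agent to discover from the documentation.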

From this seed, agents generate increasingly specialized skills: one for querying incident tickets, another for updating CMDB records, another for running complex aggregation reports. Each skill is a refined, tested artifact — not a generic tool definition, but a proven piece of integration code with a track record.

Meta-Agent Generated Skills

Not all skills originate at runtime. Zenera's Meta-Agent — the AI-native IDE assistant that designs multi-agent systems — can pre-generate skills during agent architecture design:

The Meta-Agent reads API documentation, generates the integration code, runs tests in a sandbox, and commits the skills to the library — before the agent system is even deployed. This means agents start their operational life with a pre-built skill set that they continue to expand at runtime.

V. Where MCP Fails, Skills Succeed

Complex Multi-System Integrations

The decisive advantage of skills over MCP tools is composability. MCP tools are atomic — each tool does one thing. Real enterprise integrations are not atomic.

Consider a quarterly financial reconciliation that requires:

- Fetch all invoices from SAP for the quarter (paginated, 50,000+ records)

- Fetch corresponding purchase orders from a procurement database

- Fuzzy-match invoices to POs on vendor name, amount, and date range

- Store intermediate results in transactional LakeFS storage

- Apply regional tax rules from a compliance microservice

- Generate a discrepancy report

- Post the report to the finance team's Slack channel

- Update status flags in both source systems

With MCP, this requires eight separate tools, a complex orchestration layer to manage the flow, manual error handling for partial failures, and hope that no tool's interface changes between steps. With a skill, this is one artifact:

The skill encapsulates the entire workflow — including pagination, fuzzy matching, transactional storage on LakeFS, error handling, and cross-system updates — in a single, tested, versioned artifact. The agent calls one skill instead of orchestrating eight tools.
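To give a feel for the kind of glue logic such a skill carries, here is a sketch of just one step: fuzzy-matching invoices to purchase orders on vendor name, amount, and date range. It uses Python's standard `difflib.SequenceMatcher`; the `Record` type, the thresholds (0.8 name similarity, 0.01 amount tolerance, 30 days), and the sample data are assumptions for illustration, and the other steps of the workflow are omitted.

```python
from dataclasses import dataclass
from datetime import date
from difflib import SequenceMatcher


@dataclass
class Record:
    ref: str
    vendor: str
    amount: float
    day: date


def is_match(inv: Record, po: Record, min_name_ratio: float = 0.8,
             amount_tol: float = 0.01, max_days: int = 30) -> bool:
    """True when vendor names are similar and amount and date are close enough."""
    name_ratio = SequenceMatcher(None, inv.vendor.lower(), po.vendor.lower()).ratio()
    return (
        name_ratio >= min_name_ratio
        and abs(inv.amount - po.amount) <= amount_tol
        and abs((inv.day - po.day).days) <= max_days
    )


def match_invoices(invoices: list[Record], purchase_orders: list[Record]):
    """Return (matched ref pairs, unmatched invoices); each PO is used at most once."""
    remaining = list(purchase_orders)
    matches, discrepancies = [], []
    for inv in invoices:
        po = next((p for p in remaining if is_match(inv, p)), None)
        if po is None:
            discrepancies.append(inv)
        else:
            remaining.remove(po)
            matches.append((inv.ref, po.ref))
    return matches, discrepancies


invoices = [
    Record("INV-1", "Acme Corp.", 1200.00, date(2025, 1, 15)),
    Record("INV-2", "Globex", 310.50, date(2025, 2, 3)),
]
purchase_orders = [
    Record("PO-9", "ACME Corp", 1200.00, date(2025, 1, 10)),
    Record("PO-7", "Initech", 310.50, date(2025, 2, 1)),
]
matches, discrepancies = match_invoices(invoices, purchase_orders)
print(matches, [d.ref for d in discrepancies])
```

Logic like this has no natural home in a flat tool registry, which is why it ends up inside the skill itself.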

Adaptive to Change

When an API changes, MCP servers break. Someone must update the tool definition, redeploy the server, and hope nothing else depended on the old schema. This maintenance burden scales linearly with the number of integrations.

Skills adapt. When a self-coded skill encounters an unexpected response — a changed field name, a new pagination format, a deprecated endpoint — the agent detects the failure, re-reads the API documentation, regenerates the broken portion of the skill, tests it, and saves the updated version. The integration heals itself.
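The detect, regenerate, test, and save loop described above can be sketched as a small retry wrapper. This is an illustration under stated assumptions, not Zenera's implementation: `run_with_repair`, its parameters, and the fake regeneration step are all hypothetical, and the "agent re-reads the documentation" part is replaced by a hard-coded stub.

```python
from typing import Callable


def run_with_repair(
    skill: Callable[[], dict],
    regenerate: Callable[[Exception], Callable[[], dict]],
    validate: Callable[[dict], bool],
    max_repairs: int = 2,
):
    """Run a skill; on failure, regenerate it and retry.

    Returns (result, skill_that_produced_it, repairs_used).
    """
    current = skill
    for repairs in range(max_repairs + 1):
        try:
            result = current()
            if validate(result):
                return result, current, repairs
            error: Exception = ValueError("result failed validation")
        except Exception as exc:  # broad on purpose: any failure triggers repair
            error = exc
        if repairs < max_repairs:
            current = regenerate(error)
    raise RuntimeError(f"skill still failing after {max_repairs} repairs") from error


# Simulated API change: the field "id" was renamed to "ticket_id".
api_response = {"ticket_id": 42}


def old_skill() -> dict:
    return {"id": api_response["id"]}  # raises KeyError after the rename


def fake_regenerate(error: Exception) -> Callable[[], dict]:
    # Stand-in for the agent re-reading docs and rewriting the broken portion.
    def new_skill() -> dict:
        return {"id": api_response["ticket_id"]}
    return new_skill


result, healed, repairs = run_with_repair(old_skill, fake_regenerate, lambda r: "id" in r)
print(result, repairs)
```

The returned `healed` callable is what would be saved back to the library as the new version; the cap on repairs keeps a genuinely broken upstream system from causing an endless regeneration loop.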

Context Window Efficiency

MCP's approach to tool discovery is brute-force: load every tool description into the context window and let the model figure it out. With hundreds of tools, this wastes tokens, slows inference, and degrades selection accuracy.

Skills solve this with semantic search over descriptions. When an agent needs to interact with an external system, it searches the skill library by natural language description — not by scanning a flat list of tool schemas. Only the relevant skill's description and interface are loaded into context. The implementation code executes externally; it never enters the context window at all.

| | MCP | Skills |
|---|---|---|
| Discovery | Load all tool schemas into context | Semantic search over descriptions |
| Context cost | O(n): grows with every tool | O(1): only matched skill loaded |
| Execution | Model generates tool call JSON inline | Skill code runs externally in sandbox |
| Tokens consumed | 150,000+ for large registries | < 500 for skill description + params |

VI. The Integration Hierarchy

Zenera does not reject MCP. It positions MCP as one layer in a hierarchy of integration strategies, each suited to different enterprise realities:

The hierarchy is not exclusive. A single agent might use an MCP tool for Slack notifications, a managed Nango connection for Salesforce authentication, and a self-coded skill for a legacy inventory database — all in the same workflow. The agent doesn't need to know which layer is in play. It searches for skills, finds them, and executes.

VII. The Organic Growth of an Integration Library

From Zero to Enterprise Coverage

The most profound consequence of the skills architecture is what happens over time. Every task an agent completes that involves an external system potentially creates a new reusable integration. The skill library grows organically — without a dedicated integration engineering team, without MCP server development projects, without vendor-managed connector marketplaces.

The Feedback Loop

Skills that are used frequently get battle-tested through repetition. Each invocation provides data: latency, success rate, error patterns. Skills that fail get forked and improved. Skills that are never used get archived. The library self-optimizes — not because someone manages it, but because usage is the selection mechanism.
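One way to picture usage as the selection mechanism is a triage rule over per-skill statistics. The thresholds below (90% success rate, 90 idle days) and the `UsageStats` fields are assumptions made for this sketch; the article does not specify the actual criteria.

```python
from dataclasses import dataclass


@dataclass
class UsageStats:
    invocations: int
    failures: int
    days_idle: int  # days since last use, or since creation if never used


def triage(stats: UsageStats, min_success_rate: float = 0.9, idle_days: int = 90) -> str:
    """Decide a skill's fate: 'keep', 'fork' (repair a copy), or 'archive'."""
    if stats.days_idle > idle_days:
        return "archive"          # unused for too long
    if stats.invocations == 0:
        return "keep"             # too new to judge
    success_rate = 1 - stats.failures / stats.invocations
    return "keep" if success_rate >= min_success_rate else "fork"


print(triage(UsageStats(invocations=200, failures=4, days_idle=1)))
print(triage(UsageStats(invocations=50, failures=20, days_idle=2)))
print(triage(UsageStats(invocations=0, failures=0, days_idle=120)))
```

Because the rule reads only recorded usage data, it can run on a schedule without anyone curating the library by hand.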

This is the opposite of MCP's model, where tool quality depends entirely on the server maintainer. If an MCP server's maintainer deprioritizes updates, every agent using that server suffers. With skills, the agents themselves are the maintainers.

VIII. Forward-Thinking: Integration Is an Intelligence Problem

The End of the Connector Paradigm

The history of enterprise integration is a history of connectors: ODBC drivers, ESB adapters, ETL pipelines, REST clients, GraphQL resolvers, and now MCP servers. Every era produces a new connector standard. Every standard promises universality. None delivers it, because the problem is not the connector — the problem is the assumption that integration can be pre-built.

Enterprise systems are too numerous, too varied, too idiosyncratic, and too unstable for any fixed set of connectors to provide complete coverage. The moment you finish building connectors for your current systems, new systems arrive, old systems change, and acquisitions bring entirely foreign technology stacks.

Integration should not be a manufacturing problem — building connectors on an assembly line. Integration should be an intelligence problem — understanding systems and writing the glue code that connects them.

The Agent-Native Future

The logical endpoint of the skills architecture is an enterprise where:

- Integration is not a project — it's a byproduct of agents doing their work

- No integration team is needed for routine connections — agents handle it themselves

- Complex integrations are designed by the Meta-Agent at architecture time, not hand-coded by engineers

- The integration library is alive — growing, healing, optimizing, and deprecating itself based on real production usage

- MCP is one input among many — useful when available, unnecessary when not

- Legacy systems are first-class citizens — because the agent reads the docs and writes the code, just like a human would

This is not a theoretical future. It is the architecture Zenera ships today. Every self-coded integration that succeeds is one fewer connector that needs to be built, maintained, documented, and eventually deprecated. Every skill that evolves through production use is one more step toward an enterprise where the systems themselves teach the agents how to talk to them.

Conclusion

MCP was a reasonable first step toward standardizing agent-tool communication. But it was a first step built on a last-generation assumption: that integration is a protocol problem requiring pre-built connectors. In reality, enterprise integration is an intelligence problem — and the solution is to let agents apply intelligence to it directly.

Skills are the unit of integration in an agentic world. They are generated, not configured. They are tested, not trusted. They evolve, not stagnate. And they grow with every task an agent completes.

The enterprises that will move fastest in the agentic era won't be the ones with the most MCP servers. They'll be the ones whose agents can learn to talk to any system, on demand, without waiting for someone to build a connector.